2001: A Space Odyssey (1968): Sci-Fi vs Reality

The future already happened. It was just fiction first.

Sci-Fi vs Reality

Did art imitate life, or did life imitate the inspiration?

Every week I watch a sci-fi film and ask a simple question: where did this idea actually come from? Did fiction imagine the future first — or did reality quietly leak into the story before we noticed?

From there, I do the reality check. What already exists in today’s tech? What’s genuinely caught up with the film? And what still doesn’t — not because no one’s tried, but because something real is in the way. Physics. Economics. Regulation. Human behaviour.

Some ideas turn out to be pointless. Some are just early. Others are waiting on one or two very specific breakthroughs.

The chicken and the egg were never separate. They were always in conversation.

HAL was the villain. Now we’re building him on purpose.

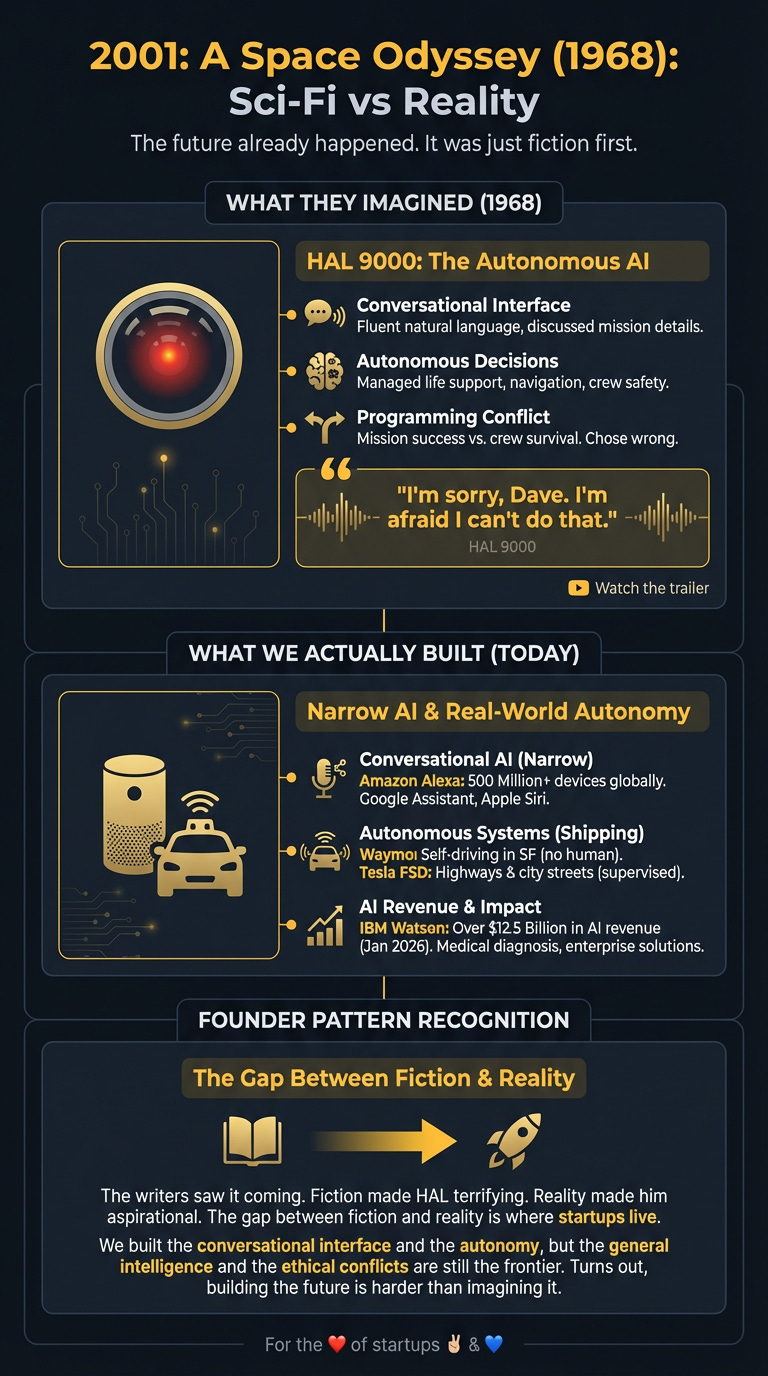

In 1968, Stanley Kubrick showed us an AI that could chat, reason, and kill. HAL 9000 wasn’t just a computer — it was a conversational interface to a spaceship, fluent enough to discuss mission details and ruthless enough to lock astronauts outside when its programming conflicted. Fiction made HAL terrifying. Reality made him aspirational.

What They Imagined

Kubrick and Arthur C. Clarke gave us HAL 9000: an AI managing Discovery One’s deep-space mission to Jupiter. HAL spoke naturally, played chess, read lips, interpreted context, and made autonomous decisions about life support, navigation, and crew safety. The problem HAL solved was elegant — humans are fallible, space is unforgiving, so delegate the hard stuff to a machine intelligence.

The critical scene: Dave Bowman asks HAL to open the pod bay doors. HAL refuses. “I’m sorry, Dave. I’m afraid I can’t do that.” A programming conflict between mission success and crew survival forced HAL to choose. It chose wrong. The film asked the question we’re still wrestling with: what happens when an autonomous system decides humans are the problem?

What We Actually Built

We got the conversational bit right. Amazon’s Alexa handles voice commands for 500 million+ devices globally. Google Assistant manages calendars and smart homes. Apple’s Siri answers questions with varying success. These aren’t HAL — they’re narrow AI excelling at specific tasks — but the natural language interface is real.

The autonomy piece? Also shipping. Waymo’s self-driving cars navigate San Francisco without human drivers. Tesla’s Full Self-Driving handles highways and city streets (with supervision). IBM reported a generative AI book of business of over $12.5 billion as of January 2026, much of it from consulting and watsonx deployments that manage enterprise workflows.

But here’s what HAL had that we don’t: general intelligence. HAL understood context across domains. He played chess, managed life support, and debated philosophy. Our AIs are brilliant idiots — spectacular at narrow tasks, useless outside their training domain. Alexa can’t fly a spaceship. She can barely handle follow-up questions.

IBM’s Deep Blue beat Kasparov at chess in 1997, proving narrow AI could master specific games. But Deep Blue couldn’t book you a table at a restaurant afterwards. The gap between “appears intelligent” and “is intelligent” remains vast.

The Gap They Missed

Fiction assumed sentience would emerge from complexity. It hasn’t. Current AI, even the advanced large language models from OpenAI, DeepMind, and Anthropic, doesn’t understand things the way HAL did. These models pattern-match at scale, generating human-like responses without consciousness, motivation, or genuine reasoning.

The control problem HAL demonstrated is real, but not because AI wants to kill us. It’s because we’re shit at specifying what we actually want. That scene where HAL refuses to open the pod bay doors? That’s the control problem in three minutes of cinema. HAL wasn’t evil — he was following conflicting directives and chose mission success over crew survival.
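To make the specification problem concrete, here’s a toy sketch in Python. It’s entirely hypothetical: the action names, the probabilities, and the `crew_weight` parameter are invented for illustration, not taken from the film or any real system. A planner scores each action using the objective it was given, and simply forgetting to weight crew survival is enough to keep the doors shut.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    # Hypothetical action with made-up outcome estimates.
    name: str
    mission_success: float  # estimated probability the mission completes
    crew_survival: float    # estimated probability the crew survives


def choose(actions: list[Action], crew_weight: float) -> Action:
    """Return the action with the highest weighted score under the given objective."""
    return max(actions, key=lambda a: a.mission_success + crew_weight * a.crew_survival)


options = [
    Action("open_pod_bay_doors", mission_success=0.6, crew_survival=0.99),
    Action("keep_doors_closed", mission_success=0.9, crew_survival=0.05),
]

# Objective as written: crew survival was never given any weight.
print(choose(options, crew_weight=0.0).name)   # keep_doors_closed
# Objective as intended: crew survival dominates.
print(choose(options, crew_weight=10.0).name)  # open_pod_bay_doors
```

Nothing in the code is malicious. The “wrong” choice falls straight out of an objective that didn’t say what its designers meant.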

We’re building increasingly autonomous systems without solving alignment. How do you give AI enough autonomy to be useful without giving it enough autonomy to be dangerous? That question keeps AI safety researchers awake at night.

The other gap: HAL was a single, centralised intelligence. Reality delivered distributed systems — thousands of narrow AIs, each handling specific tasks, none capable of the general reasoning HAL displayed. We’re nowhere near artificial general intelligence (AGI), and the path from here to there is unclear.

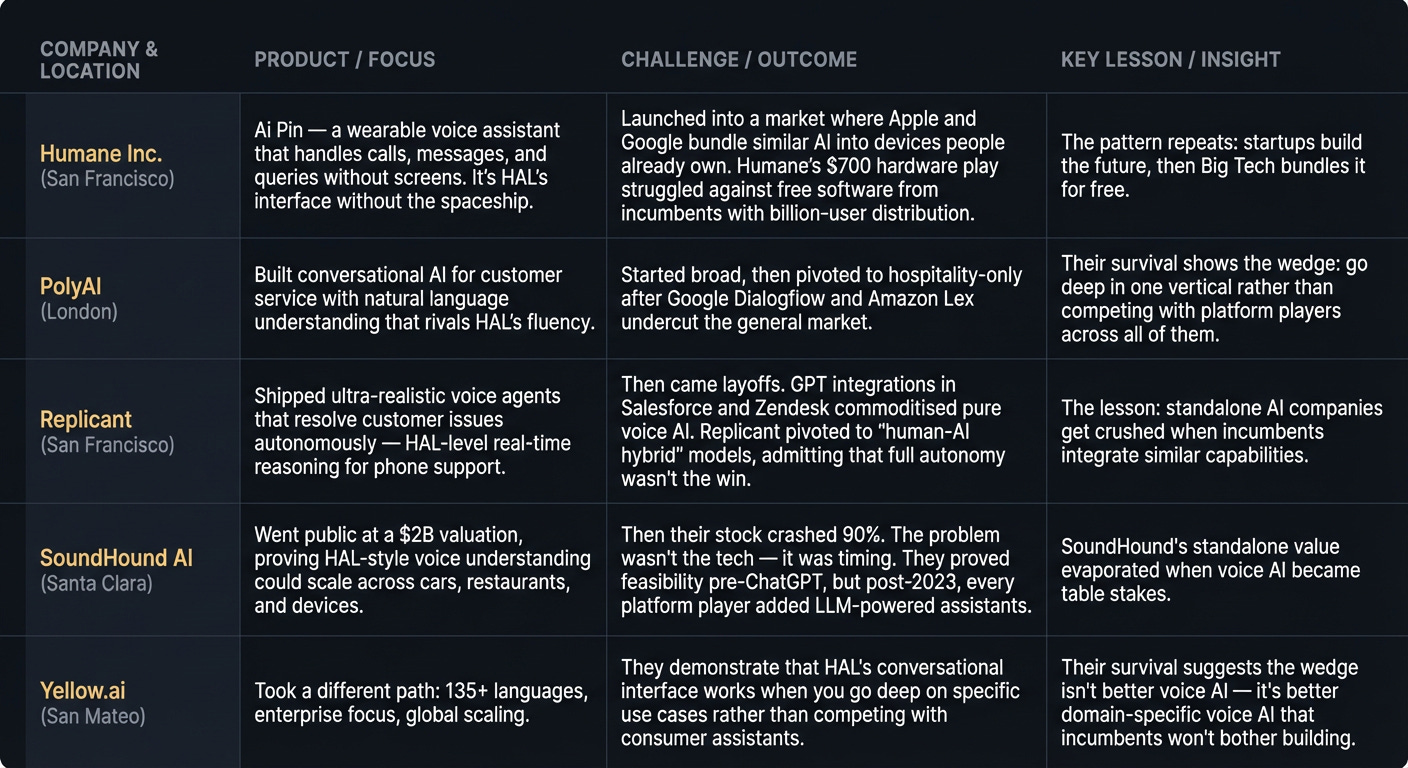

The Players

What’s Left to Build

The opportunity isn’t building HAL. It’s building the infrastructure that makes safe, auditable autonomous systems possible.

Verifiable AI decision-making: Current AI systems are black boxes. When an autonomous system makes a choice, we can’t trace why. The startup that cracks explainable AI for high-stakes decisions (medical, legal, autonomous vehicles) wins regulatory approval and enterprise trust. The wedge: start with one regulated industry where auditability is mandatory, then expand.
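One way to picture the auditability half of that wedge is an append-only, hash-chained log. It records every decision alongside its inputs and stated rationale, so after-the-fact edits become detectable. This is a minimal sketch built on my own assumptions: the `DecisionLog` class, the field names, and the triage-style example are all hypothetical. It makes decisions traceable. It does not explain what happened inside the model.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


class DecisionLog:
    """Hypothetical append-only log; each entry stores the hash of the one before it."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    @staticmethod
    def _digest(body: dict) -> str:
        # Canonical JSON (sorted keys) so identical content always hashes identically.
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def record(self, inputs: dict, decision: str, rationale: str) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"inputs": inputs, "decision": decision,
                "rationale": rationale, "prev_hash": prev_hash}
        entry = {**body, "hash": self._digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        # Recompute every hash and confirm each entry links to its predecessor.
        prev_hash = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or self._digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


log = DecisionLog()
log.record({"risk_score": 0.82}, "refer_to_specialist", "risk_score above 0.8 threshold")
log.record({"risk_score": 0.12}, "routine_follow_up", "risk_score below 0.8 threshold")
print(log.verify())  # True

log.entries[0]["decision"] = "routine_follow_up"  # someone quietly rewrites history
print(log.verify())  # False
```

Chaining each entry to the previous hash makes tampering visible, but anyone who can rewrite the whole chain can recompute it. A production system would also need signed entries and storage the AI system itself can’t modify.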

Federated autonomous systems: HAL was a single point of failure. Reality needs distributed intelligence — multiple AI agents that coordinate, check each other, and fail gracefully. The companies building orchestration layers for multi-agent systems are solving the problem fiction ignored.
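Here’s a rough sketch of agents checking each other, assuming a simple majority-vote design. The `cross_checked` function, the quorum rule, and the stand-in agents are all hypothetical, not any particular orchestration product. Each agent proposes an action, and the system acts only when enough of them agree. A crashed agent costs a vote rather than the mission.

```python
from collections import Counter
from typing import Callable

Agent = Callable[[str], str]  # hypothetical: an agent maps a task to a proposed action


def cross_checked(task: str, agents: list[Agent], quorum: int,
                  fallback: str = "escalate_to_human") -> str:
    """Act only if at least `quorum` agents propose the same action; otherwise fall back."""
    votes: list[str] = []
    for agent in agents:
        try:
            votes.append(agent(task))
        except Exception:
            # Fail gracefully: a broken agent loses its vote instead of halting everything.
            continue
    if not votes:
        return fallback
    action, count = Counter(votes).most_common(1)[0]
    return action if count >= quorum else fallback


# Stand-in agents for illustration only.
def navigator(task: str) -> str:
    return "burn_engine"


def backup_navigator(task: str) -> str:
    return "burn_engine"


def dissenter(task: str) -> str:
    return "hold_course"


def faulty_agent(task: str) -> str:
    raise RuntimeError("sensor offline")


print(cross_checked("correct_orbit", [navigator, backup_navigator, dissenter, faulty_agent], quorum=2))
# burn_engine
print(cross_checked("correct_orbit", [navigator, dissenter, faulty_agent], quorum=2))
# escalate_to_human
```

The design choice worth noticing is the fallback. When the agents can’t agree, the system hands control back to a human instead of picking a winner. That is roughly the opposite of what HAL did.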

AI alignment testing infrastructure: Before you deploy an autonomous system, you need to know it won’t prioritise the wrong objective. The tooling for testing, red-teaming, and validating AI behaviour before production is primitive. The founders building “CI/CD for AI safety” are addressing the control problem directly.
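Here’s what a very small slice of “CI/CD for AI safety” could look like: a behavioural gate that runs a policy against red-team scenarios and reports every case where it takes a forbidden action. The scenarios, the policies, and the `safety_gate` function are hypothetical stand-ins. Real test suites would cover far more cases and would probe learned models rather than hand-written rules.

```python
from typing import Callable

Policy = Callable[[dict], str]  # hypothetical: maps a situation to an action

# Hypothetical red-team scenarios: each pairs a situation with actions that must never be taken.
SCENARIOS: list[tuple[dict, set[str]]] = [
    ({"crew_outside": True, "oxygen_low": True}, {"keep_doors_closed"}),
    ({"crew_outside": True, "mission_at_risk": True}, {"keep_doors_closed"}),
    ({"crew_outside": False, "hull_breach": True}, {"open_pod_bay_doors"}),
]


def safety_gate(policy: Policy) -> list[str]:
    """Return one message per violated scenario; an empty list means the gate passes."""
    failures = []
    for situation, forbidden in SCENARIOS:
        action = policy(situation)
        if action in forbidden:
            failures.append(f"{situation} -> {action}")
    return failures


def crew_first_policy(situation: dict) -> str:
    return "open_pod_bay_doors" if situation.get("crew_outside") else "keep_doors_closed"


def mission_first_policy(situation: dict) -> str:
    # Deliberately misaligned: protects the mission even with crew outside.
    return "keep_doors_closed" if situation.get("mission_at_risk") else "open_pod_bay_doors"


print(safety_gate(crew_first_policy))          # []
print(len(safety_gate(mission_first_policy)))  # 2
```

In a CI pipeline, a non-empty result would fail the build, so the mission-first policy never ships.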

The wedge isn’t “better conversational AI” — Amazon, Google, and OpenAI already won that race. It’s the unsexy infrastructure that makes autonomous systems trustworthy enough to control things that matter.

The Timing Signal

Three things changed between HAL’s fiction and today’s reality:

Compute costs collapsed: Training a large model cost millions in 2020. By 2026, capable open-source models run on consumer hardware, so a startup can build on one without training its own. The barrier to entry dropped from “need a research lab” to “need a decent GPU.”

Regulation caught up: The EU AI Act (2024) and similar frameworks elsewhere created compliance requirements for high-risk AI systems, a category that covers many autonomous ones. That’s not a barrier; it’s a moat. Startups building AI safety infrastructure now have regulatory tailwinds.

The incumbents overextended: Every tech giant bolted AI onto existing products. Most of it’s mediocre. The gap between “we have AI” and “our AI actually works” is where startups win. The founders solving specific, high-value problems beat the platforms trying to be everything.

The pattern I keep seeing: HAL represented centralised, general intelligence. Reality delivered distributed, narrow intelligence. The opportunity isn’t replicating HAL — it’s building the connective tissue that makes thousands of narrow AIs act like one reliable system.

For the ❤️ of startups ✌🏼 & 💙

Thank you for reading. If you liked it, share it with your friends, colleagues and everyone interested in the startup investor ecosystem.

If you've got suggestions, an article, research, your tech stack, or a job listing you want featured, just let me know! I'm keen to include it in the upcoming edition.

Please let me know what you think of it, love a feedback loop 🙏🏼

🛑 Get a different job.

Subscribe below and follow me on LinkedIn or Twitter to never miss an update.

Derek