Domain Histories: Agentic Systems & Automation

The best founders are students of history

This is Domain Histories — a weekly series mapping startup domains from first principles. The best founders are students of history. Patrick Collison met Visa's founder before building Stripe. Apoorva Mehta studied Webvan's collapse before building Instacart. They used history to make specific decisions about what to build, what to avoid, and when to move. Each issue takes one domain and gives you the full map: who tried before, why they failed, where the money sits, what's shipping now, and what a founder entering today should do differently because of it. Not a market overview. Not a research report. The history that changes what you build.

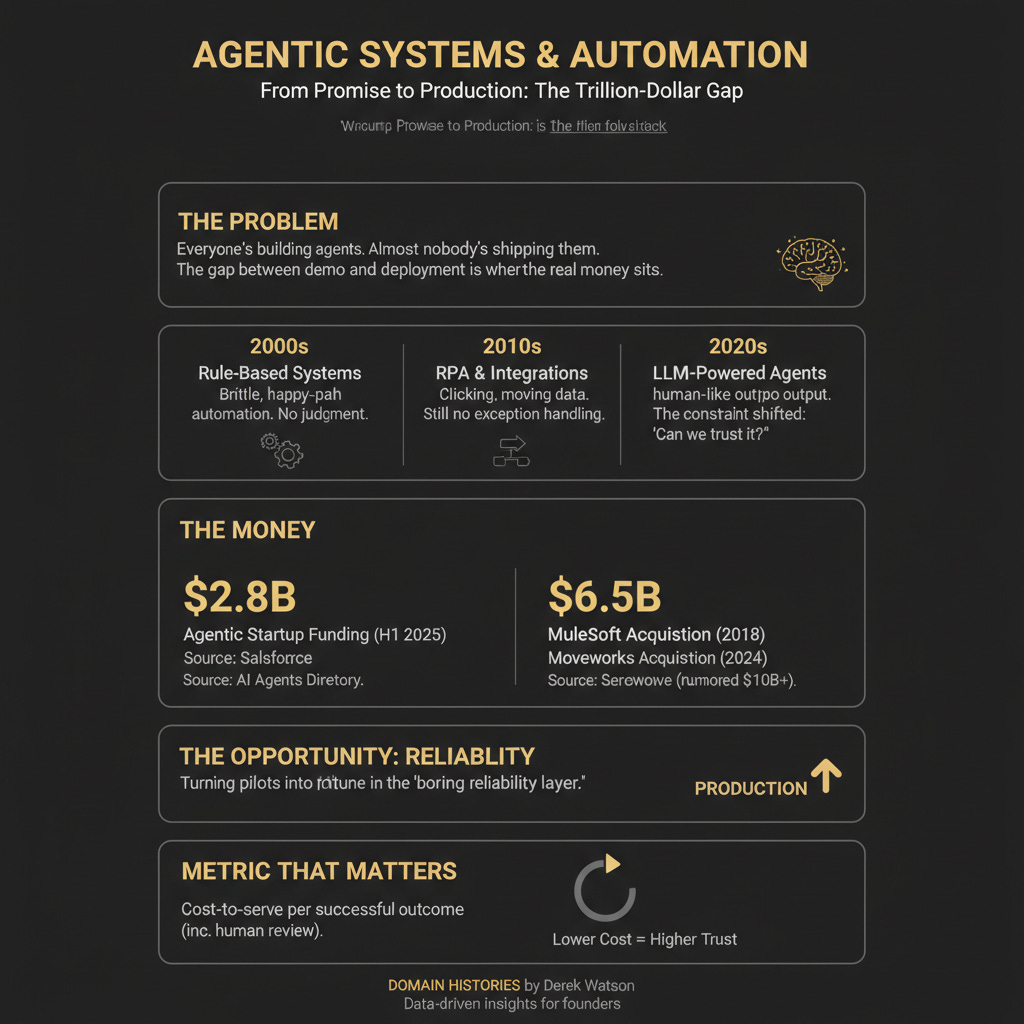

Everyone’s building agents. Almost nobody’s shipping them to production. The gap between demo and deployment is where the real money sits. $2.8B flowed into agentic startups in H1 2025 alone — but most of that capital chases wrappers. Your pitch is: we sell the boring reliability layer that turns pilots into production. We’ve been here before. Just with worse technology and different buzzwords.

The Original Problem

Businesses have always paid humans to do repetitive work that computers should handle. The problem wasn’t recognising this — it was building systems reliable enough to trust with real operations. Early automation was brittle. Rule-based systems broke the moment reality deviated from the script. Robotic Process Automation (RPA) could click through SAP screens, but couldn’t handle exceptions. Integration platforms could move data between systems, but couldn’t decide what to do with it. The constraint was intelligence. You could automate the happy path. You couldn’t automate judgement. LLMs changed that constraint. Suddenly you had systems that could read unstructured data, make decisions based on context, and generate outputs that looked human. The question shifted from “can we automate this?” to “can we trust it?” That’s where most founders are stuck today. Building an agent that works in a demo is easy. Building one that won’t bankrupt your customer when it hallucinates is the actual product.

The Numbers

Funding is flowing, but market sizing is noisy. The only number that matters early: cost-to-serve per successful outcome, including human review.
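One way to make that number concrete is to divide all spend, model plus tooling plus human review, by the count of outcomes that actually succeeded. The sketch below is illustrative only: the field names and every dollar figure are assumptions, not data from any real deployment.

```python
from dataclasses import dataclass


@dataclass
class PeriodStats:
    """Totals for one billing period. All figures used below are hypothetical."""
    llm_cost_usd: float                # model/API spend
    tooling_cost_usd: float            # observability and infrastructure
    review_minutes: float              # human time spent checking agent output
    reviewer_rate_usd_per_hour: float  # loaded hourly cost of a reviewer
    successful_outcomes: int           # tasks completed correctly end to end


def cost_per_successful_outcome(s: PeriodStats) -> float:
    """Total cost, including human review, divided by successful outcomes."""
    if s.successful_outcomes <= 0:
        raise ValueError("no successful outcomes in this period")
    review_cost = s.review_minutes / 60 * s.reviewer_rate_usd_per_hour
    total = s.llm_cost_usd + s.tooling_cost_usd + review_cost
    return total / s.successful_outcomes


stats = PeriodStats(
    llm_cost_usd=400.0,
    tooling_cost_usd=100.0,
    review_minutes=600.0,
    reviewer_rate_usd_per_hour=50.0,
    successful_outcomes=500,
)
print(cost_per_successful_outcome(stats))  # 2.0
```

In this made-up example, human review costs as much as models and tooling combined, which is why leaving it out of the denominator's numerator flatters the unit economics.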

The acquisition numbers tell the story better than market projections. Incumbents are paying billions for distribution and trust. If you’re building in this space, that’s your exit benchmark — or your competition.

The Timeline

Era 1: Rule-Based Automation (1990s–2010s)

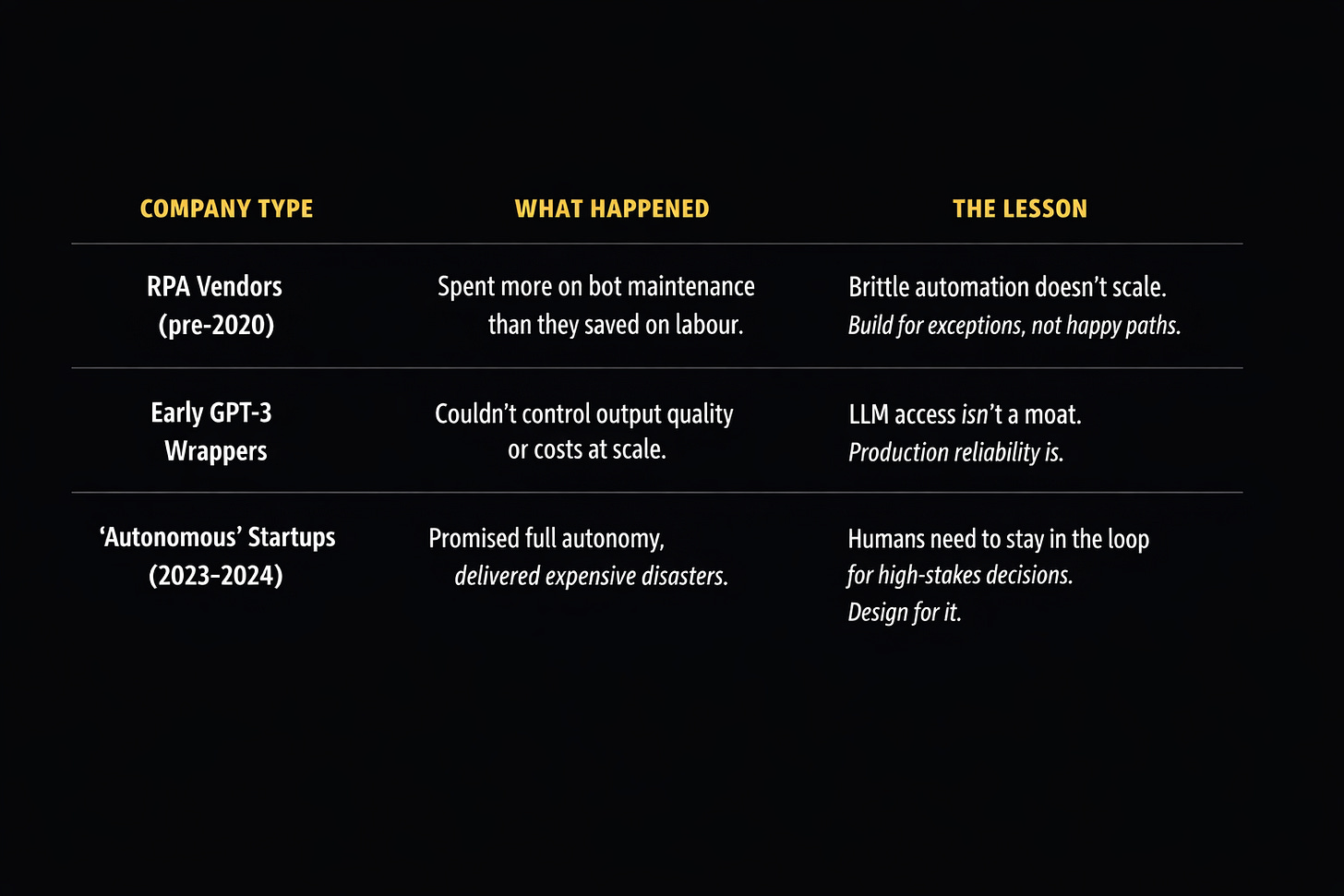

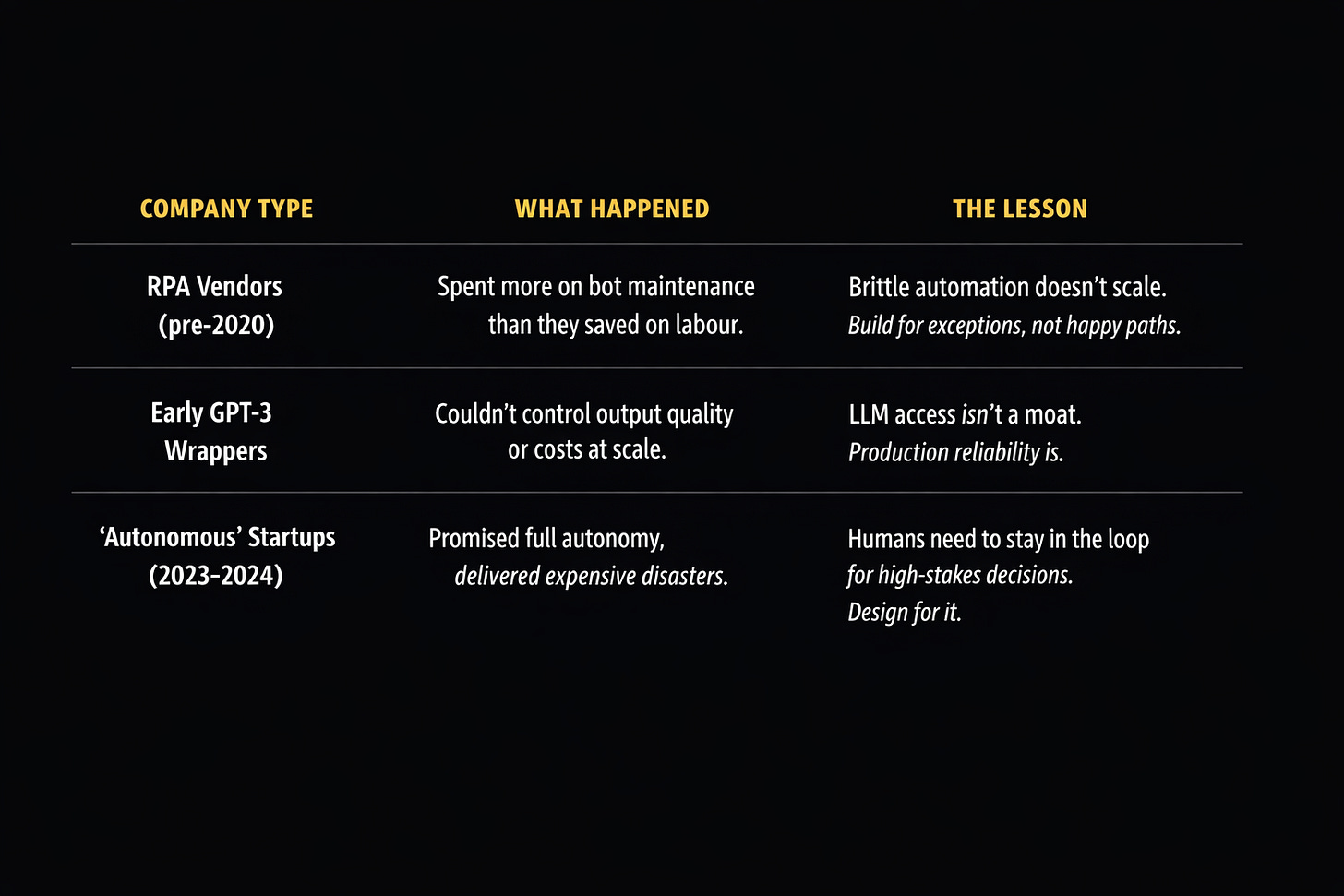

What enabled it: GUIs, APIs, enterprise software standardisation.

The first wave was about replacing mouse clicks. RPA tools like Blue Prism and UiPath sold “digital workers” that could log into systems, extract data, and update records. The pitch was simple: automate the boring stuff your analysts hate doing.

The playbook: identify high-volume, low-complexity tasks. Build bots that mimicked human actions. Deploy them in controlled environments where exceptions were rare.

Why it ended: Brittleness. These systems broke constantly. A UI update would kill your bot. An unexpected error message would leave it stuck in a loop. You needed armies of bot maintainers. The promised ROI evaporated in maintenance costs. Blue Prism went public in 2016, then sold to SS&C in 2022 for roughly £1.2B (about $1.6B) after maintenance costs crippled its margins. Banks were spending more on bot babysitters than they saved on headcount.

What it means today: If your agent can’t handle the thousand edge cases that break your system at 3am, you’re building RPA with better PR.

Era 2: Integration Platforms (2010s–2020s)

What enabled it: Cloud APIs, Zapier-style workflow builders, microservices.

The second wave moved from UI automation to API-first integration. Tools like Workato and Tray.io let you connect systems without brittle screen scraping. The pitch evolved: don’t automate actions, automate workflows. Key exit: MuleSoft, acquired by Salesforce for $6.5B in 2018.

The playbook: build visual workflow builders. Let business users create integrations. Charge based on tasks executed.

Why it ended: Still no intelligence. You could move data between systems, but you couldn’t make decisions about that data. Every conditional required manual configuration. Complex workflows became unmaintainable spaghetti.

What it means today: Your agent needs to do more than connect APIs. If a human still has to configure every decision path, you haven’t solved the problem.

Era 3: Early AI Agents (2020–2023)

What enabled it: GPT-3, function calling, vector databases.

The third wave added reasoning. LLMs could understand natural language instructions, decide which tools to use, and chain actions together. The pitch shifted again: autonomous AI that can figure out what to do.

The playbook: wrap LLMs with tool access. Let them call APIs, query databases, and execute code. Market it as “AI that takes action.”

Why it stumbled: Demos worked brilliantly. Deployments struggled with hallucinations, costs, and security. Few have solved it in a repeatable, self-serve way.

What it means today: This is where most founders are right now. You’ve built something impressive. You can’t ship it because you don’t know what it’ll do under load.

Era 4: Production-Grade Agentic Systems (2024–Present)

What enabled it: Better models (GPT, Claude, Gemini, and numerous open-source models), structured outputs, observability tools, human-in-the-loop patterns.

This is the era we’re in. The technology works. The challenge is reliability. Smart companies aren’t building fully autonomous agents. They’re building systems with guardrails: structured outputs that force valid responses, confidence scoring that flags uncertain decisions, human-in-the-loop workflows that catch errors before they propagate. Key signal: Moveworks, acquired by ServiceNow for $2.85B. Incumbents aren’t building this from scratch; they’re buying it.

The playbook: start with high-value, low-risk use cases. Build observability from day one. Design for human oversight. Charge based on outcomes, not API calls.

What it means for founders: Your differentiation isn’t the LLM. It’s the boring infrastructure that makes it safe to deploy.
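To make the guardrail idea less abstract, here is a minimal sketch of validating a model's structured output before anything acts on it. It assumes the model has been asked to return JSON with an `action` and a `confidence` field; that shape, the `ALLOWED_ACTIONS` set, and the 0.8 review threshold are all hypothetical choices for illustration.

```python
import json
from dataclasses import dataclass

ALLOWED_ACTIONS = {"refund", "escalate", "reply"}  # hypothetical action set


@dataclass
class Decision:
    action: str
    confidence: float
    needs_review: bool


def parse_decision(raw: str, review_below: float = 0.8) -> Decision:
    """Validate raw model output; malformed or uncertain output goes to a human."""
    fallback = Decision("escalate", 0.0, True)
    try:
        data = json.loads(raw)
        action = data["action"]
        confidence = float(data["confidence"])
    except (json.JSONDecodeError, KeyError, TypeError, ValueError):
        return fallback  # not JSON, wrong shape, or non-numeric confidence
    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        return fallback  # the model proposed an action we never offered
    if not 0.0 <= confidence <= 1.0:
        return fallback  # out-of-range (or NaN) confidence is treated as invalid
    return Decision(action, confidence, needs_review=confidence < review_below)


print(parse_decision('{"action": "refund", "confidence": 0.95}'))
print(parse_decision('{"action": "drop_table", "confidence": 0.99}'))
```

The design choice worth noting is that every failure mode collapses to the same safe default (escalate to a person) rather than raising or guessing.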

The Production Wall

Most failures in this space don’t make headlines because companies pivot before they die. But the patterns are clear:

The real graveyard is quieter: internal projects at enterprises that got killed after six months because they couldn’t move from pilot to production. That’s where most of the learning is happening.

Red flags for week one:

Can your agent explain every tool call? If not, you can’t debug.

Can it be rate-limited per user? Unlimited spend isn’t production-ready.

What’s your rollback path when it writes bad data? No undo, no deploy.
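The rollback question in particular is easier to answer if the agent never writes directly. A minimal sketch of a staged write path with undo follows; `StagedStore` is a hypothetical in-memory stand-in for whatever system of record the agent touches, not a real library.

```python
from dataclasses import dataclass, field
from typing import Any

_MISSING = object()  # sentinel: the key did not exist before the write


@dataclass
class StagedStore:
    """In-memory stand-in for a system of record (hypothetical)."""
    data: dict[str, Any] = field(default_factory=dict)
    staged: dict[str, Any] = field(default_factory=dict)
    undo_log: list[dict[str, Any]] = field(default_factory=list)

    def stage(self, key: str, value: Any) -> None:
        """Record an intended write without touching live data."""
        self.staged[key] = value

    def commit(self) -> None:
        """Apply staged writes, remembering prior values so they can be undone."""
        before = {k: self.data.get(k, _MISSING) for k in self.staged}
        self.data.update(self.staged)
        self.undo_log.append(before)
        self.staged.clear()

    def undo(self) -> None:
        """Revert the most recent commit, if there is one."""
        if not self.undo_log:
            return
        for key, old in self.undo_log.pop().items():
            if old is _MISSING:
                self.data.pop(key, None)
            else:
                self.data[key] = old


store = StagedStore(data={"invoice-1": "open"})
store.stage("invoice-1", "paid")
store.stage("invoice-2", "open")
store.commit()
store.undo()
print(store.data)  # {'invoice-1': 'open'}
```

A real system would persist the undo log and handle concurrent writers; the point of the sketch is only that staging and undo are cheap to design in and expensive to retrofit.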

Where The Money Sits

The margins live between layers 2 and 3. Frameworks are commoditised. Applications are crowded. But orchestration (the boring infrastructure that routes Agent A’s output to Agent B’s input, handles rollback, manages cost) is where you can charge enterprise prices because nobody’s solved it yet.

The value chain:

Foundation models (OpenAI, Anthropic, Google)

Agent frameworks (LangChain, CrewAI, AutoGPT)

Orchestration platforms ← the gap

Application layer (customer service, sales, operations)

Incumbents are buying in. ServiceNow paid $2.85B for Moveworks. Salesforce paid $6.5B for MuleSoft. They’re buying distribution and trust to embed agentic capabilities into existing platforms. They’ll own enterprise deployment within 24 months unless you can crack mid-market self-serve first.

The blind spot: Mid-market companies with 100–1,000 employees. Too complex for Zapier. Too small for ServiceNow. Desperate for automation they don’t need a systems integrator to deploy.

The tax everyone pays: LLM API costs, observability tooling, and human oversight. Your margin is what’s left after those three. Most founders underestimate the last one.

What’s Actually Hard

Reliability isn’t sexy, but it’s the entire product. Your agent needs to work 99.9% of the time, not 95%. That last 4.9% is where you’ll spend 90% of your engineering time.

Observability from day one. You can’t debug what you can’t see. When an agent makes a bad decision, you need to trace exactly why: which context it used, which tools it called, what the model returned. Most founders bolt this on later. By then it’s too late.

Cost control under adversarial conditions. A founder we know watched their agent get tricked into a loop that called GPT-4 8,000 times in an hour: $3,200 gone before the alert fired. Production means rate limits, circuit breakers, and assuming users will try to break you.

Human-in-the-loop isn’t a fallback; it’s the design. The best systems don’t try to eliminate humans. They route decisions to humans when confidence is low, and learn from those decisions to improve. Building this feedback loop is harder than building the agent.

Multi-step reliability compounds. An agent that’s 95% accurate per step is about 86% accurate over three steps and 77% over five. By step ten, you’re at 60%. You need much higher per-step reliability than you think.
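The compounding arithmetic is worth running yourself. The sketch below assumes each step succeeds or fails independently with the same probability, which is a simplification; real steps are often correlated.

```python
def chain_success(per_step: float, steps: int) -> float:
    """End-to-end success rate when every step must succeed (independence assumed)."""
    return per_step ** steps


def required_per_step(target: float, steps: int) -> float:
    """Per-step success rate needed to hit an end-to-end target."""
    return target ** (1 / steps)


for n in (3, 5, 10):
    print(n, round(chain_success(0.95, n), 3))
# 3 0.857
# 5 0.774
# 10 0.599

print(round(required_per_step(0.99, 10), 4))  # 0.999
```

Read the last line in reverse: to get a ten-step workflow right 99% of the time, each step has to be right about 99.9% of the time.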

What’s Shipping Now

The companies actually deploying agents in production are doing boring, specific things:

Customer service triage: Agents that read support tickets, categorise them, pull relevant context, and draft responses for human review. Not fully autonomous — augmented.

Sales research: Agents that monitor buying signals, enrich lead data, and flag opportunities. The human still closes the deal.

Code review: Agents that scan PRs for security issues, style violations, and potential bugs. The developer still approves the merge.

Data entry: Agents that extract structured data from unstructured sources (emails, PDFs, forms) and populate systems. With human spot-checks.

Notice the pattern? Real deployments keep humans in the loop for the final decision. The agent does the grunt work. The human does the judgement.

Where This Goes

Consolidation around production-grade platforms. The framework wars will end. Winners will be the ones that solve observability, cost control, and reliability.

Agentic systems will merge with existing workflow automation. You won’t “buy an AI agent.” You’ll upgrade your CRM, and it’ll come with agents built in. ServiceNow’s Moveworks acquisition is the blueprint. Assume distribution will be bundled into incumbent suites. Build for plug-in deployment and procurement from day one.

Don’t start multi-agent. Start single-agent with strict contracts. Your roadmap to multi-agent: (a) typed tool interfaces, (b) shared state store with versioning, (c) conflict resolution policy.
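As a rough illustration of what those three roadmap items can look like in code, here is a small sketch. Every name in it (`LookupOrder`, `VersionedState`, `StaleWrite`) is hypothetical, and "reject stale writes" is just one possible conflict resolution policy, chosen here because it is the simplest to reason about.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class LookupOrderInput:
    order_id: str


@dataclass(frozen=True)
class LookupOrderOutput:
    order_id: str
    status: str


class OrderTool(Protocol):
    """(a) Typed tool interface: fixed input and output types per tool."""
    name: str

    def run(self, request: LookupOrderInput) -> LookupOrderOutput: ...


class LookupOrder:
    name = "lookup_order"

    def __init__(self, orders: dict[str, str]) -> None:
        self._orders = orders

    def run(self, request: LookupOrderInput) -> LookupOrderOutput:
        if not isinstance(request, LookupOrderInput):
            raise TypeError("lookup_order expects LookupOrderInput")
        return LookupOrderOutput(request.order_id, self._orders[request.order_id])


class StaleWrite(Exception):
    """Raised when a writer's view of shared state is out of date."""


class VersionedState:
    """(b) Shared state with a version counter; (c) policy: reject stale writes."""

    def __init__(self) -> None:
        self.version = 0
        self.values: dict[str, str] = {}

    def write(self, expected_version: int, updates: dict[str, str]) -> int:
        if expected_version != self.version:
            raise StaleWrite(f"expected v{expected_version}, state is v{self.version}")
        self.values.update(updates)
        self.version += 1
        return self.version


tool: OrderTool = LookupOrder({"A-1": "shipped"})
result = tool.run(LookupOrderInput("A-1"))
state = VersionedState()
state.write(0, {"A-1": result.status})  # succeeds, state is now v1
```

A second agent that read the state at version 0 and tries to write afterwards gets a `StaleWrite` and must re-read, which is the whole conflict policy in this sketch.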

Moves Worth Stealing

Start with the audit trail, not the agent. Before you build autonomy, build the infrastructure to understand every decision your system makes. This becomes your differentiation when you’re selling to enterprises.

Charge for outcomes, not API calls. The companies winning right now are the ones that absorb the LLM cost risk and charge based on results. This forces you to optimise for reliability, not features.

Design for the escalation path. When your agent hits uncertainty, what happens? The best systems route to humans seamlessly, learn from their decisions, and get better over time. Build this workflow from day one, not as an afterthought.
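The escalation path described above can be sketched in a few lines. This is an assumption-heavy illustration: `EscalationRouter`, the 0.85 threshold, and the idea of storing (proposed, final) pairs as training signal are hypothetical choices, not a reference design.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Escalation:
    item_id: str
    proposed: str
    confidence: float


@dataclass
class EscalationRouter:
    threshold: float = 0.85  # hypothetical cut-off for acting without review
    queue: list[Escalation] = field(default_factory=list)
    # (item_id, agent's proposal, human's final answer) for later evaluation
    feedback: list[tuple[str, str, str]] = field(default_factory=list)

    def route(self, item_id: str, proposed: str, confidence: float) -> Optional[str]:
        """Return the proposal if confident enough, else queue it for a human."""
        if confidence >= self.threshold:
            return proposed
        self.queue.append(Escalation(item_id, proposed, confidence))
        return None

    def resolve(self, human_answer: str) -> str:
        """A human handles the oldest queued item; keep the pair as feedback."""
        item = self.queue.pop(0)
        self.feedback.append((item.item_id, item.proposed, human_answer))
        return human_answer

    def agreement_rate(self) -> float:
        """Share of escalated items where the human kept the agent's proposal."""
        if not self.feedback:
            return 0.0
        agreed = sum(1 for _, proposed, final in self.feedback if proposed == final)
        return agreed / len(self.feedback)


router = EscalationRouter()
router.route("ticket-1", "refund", 0.95)   # returned directly
router.route("ticket-2", "refund", 0.40)   # queued for a human
router.resolve("deny")
```

Tracking the agreement rate over time gives a cheap signal for whether the threshold can safely move.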

The Why Now

Three things changed:

Models got reliable enough. GPT and Claude don’t hallucinate as much. Structured outputs give you guarantees. Function calling actually works.

Enterprises got desperate. Labour costs are up. Hiring is hard. Productivity is flat. Companies that wouldn’t touch AI two years ago are now mandating it.

The infrastructure exists. Observability tools, vector databases, orchestration frameworks — the boring stuff that makes agents production-ready is finally available. You don’t have to build it from scratch.

The window is open. But it won’t stay open forever. The incumbents are buying their way in. If you’re starting today, you’re racing against both startups and incumbents. The startups have velocity. The incumbents have distribution. You need both.

If You’re Starting Monday

The wedge: Pick one workflow in one vertical where humans do repetitive judgement work. Customer service ticket routing. Sales lead qualification. Code review. Not “AI for everything”, but one specific thing you can ship in three months.

The trap: Building for full autonomy. You’ll waste a year trying to eliminate humans from the loop. Design for augmentation instead. The agent handles the grunt work. The human handles edge cases. Ship that.

The customer: Mid-market companies (100–1,000 employees) in regulated industries. They have the budget and the pain, but they can’t afford multi-year enterprise implementations. They need something that works out of the box with minimal customisation.

The first hire: Not a prompt engineer. An infrastructure engineer who’s built production systems at scale. The LLM is the easy part. Reliability is the product.

Week 1 ship list:

(i) eval harness with 200 real cases,

(ii) tool-call logging + replay,

(iii) per-tenant spend caps,

(iv) write-path staging + undo,

(v) escalation queue with SLAs.
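Items (ii) and (iii) on that list fit naturally into one wrapper around tool execution. The following is a minimal sketch under stated assumptions: `GuardedRunner` is a hypothetical name, per-call costs are estimates supplied by the caller, and the log lives in memory rather than durable storage.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


class SpendCapExceeded(RuntimeError):
    """Raised before a call that would push a tenant past its cap."""


@dataclass
class ToolCall:
    tenant: str
    tool: str
    args: dict[str, Any]
    result: Any
    cost_usd: float


@dataclass
class GuardedRunner:
    tools: dict[str, Callable[..., Any]]
    caps_usd: dict[str, float]            # tenants without an entry get a cap of 0
    spent_usd: dict[str, float] = field(default_factory=dict)
    log: list[ToolCall] = field(default_factory=list)

    def call(self, tenant: str, tool: str, args: dict[str, Any], cost_usd: float) -> Any:
        """Run a tool only if the tenant stays under its cap; log every call."""
        spent = self.spent_usd.get(tenant, 0.0)
        if spent + cost_usd > self.caps_usd.get(tenant, 0.0):
            raise SpendCapExceeded(f"{tenant} would exceed its spend cap")
        result = self.tools[tool](**args)
        self.spent_usd[tenant] = spent + cost_usd
        self.log.append(ToolCall(tenant, tool, dict(args), result, cost_usd))
        return result

    def replay(self) -> list[bool]:
        """Re-run logged calls and report whether each result still matches.

        Only safe for side-effect-free tools; real replay would use recorded
        responses or a sandbox.
        """
        return [self.tools[c.tool](**c.args) == c.result for c in self.log]


runner = GuardedRunner(tools={"add": lambda a, b: a + b}, caps_usd={"acme": 1.0})
runner.call("acme", "add", {"a": 1, "b": 2}, cost_usd=0.4)
runner.call("acme", "add", {"a": 5, "b": 5}, cost_usd=0.4)
# A third 0.40 call would raise SpendCapExceeded: 1.20 is over the 1.00 cap.
```

Checking the cap before the call, rather than alerting after it, is the difference between a budget and a post-mortem.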

Start with something boring that works. Scale from there. The companies trying to build AGI will run out of money. The companies solving real problems will print it.

Go Deeper

Agentic AI Venture Funding: $2.8B in H1 2025 — Where the money’s going and what VCs are betting on

ServiceNow’s $2.85B Moveworks Acquisition — What it signals about incumbent strategy in agentic AI

AI Startup Funding Trends (Crunchbase) — Broader AI investment context and where agentic fits

2025 State of Generative AI in the Enterprise (Menlo Ventures) — Enterprise adoption patterns and the pilot-to-production gap

Top AI Agent Startups by Funding — Market sizing and the key players

For the ❤️ of startups

Arthur is the AI native startup operating system I’m building in public — not hype, but a system that turns input into structured execution and tracks founder progress. If you want to follow or use it, it’s open for early access.

→ Access Arthur

Thank you for reading. If you liked it, share it with your friends, colleagues and everyone interested in the startup investor ecosystem.

If you’ve got suggestions, an article, research, your tech stack, or a job listing you want featured, just let me know! I’m keen to include it in the upcoming edition.

Please let me know what you think of it, love a feedback loop 🙏🏼


Subscribe and follow me on LinkedIn or Twitter to never miss an update.