Domain Histories: Data Infrastructure & Analytics

The best founders are students of history

This is Domain Histories — a weekly series mapping startup domains from first principles. The best founders are students of history. Patrick Collison met Visa's founder before building Stripe. Apoorva Mehta studied Webvan's collapse before building Instacart. They used history to make specific decisions about what to build, what to avoid, and when to move. Each issue takes one domain and gives you the full map: who tried before, why they failed, where the money sits, what's shipping now, and what a founder entering today should do differently because of it. Not a market overview. Not a research report. The history that changes what you build.

Every company says they’re data-driven. Almost none can query last week’s data.

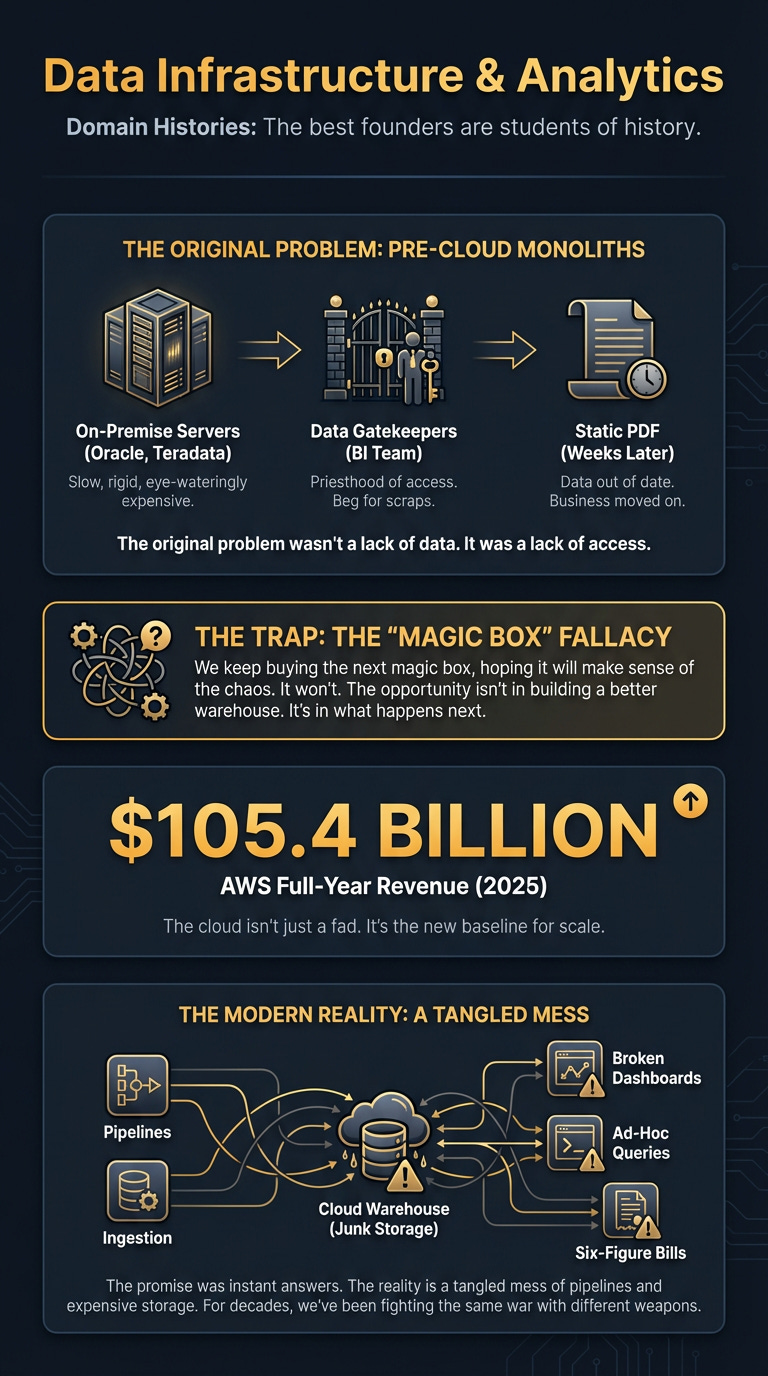

The promise was simple: get all your data in one place, ask any question, get an instant answer. The reality is a tangled mess of pipelines, broken dashboards, and six-figure bills for a warehouse that mostly stores junk. For decades, we’ve been fighting the same war with different weapons.

Here’s the thing: the problem isn’t the technology. It’s our understanding of it. We keep buying the next magic box, hoping it will finally make sense of the chaos. It won’t.

But if you understand the history — the sequence of problems and the ghosts of solutions past — you can see where the real opportunity is. It’s not in building a better warehouse. It’s in what happens next.

The Original Problem

Before the cloud, data lived in a basement.

It sat in hugely expensive, on-premise servers from companies like Oracle or Teradata. These were monoliths. Slow, rigid, and eye-wateringly expensive. Getting data in was a project. Getting it out was a nightmare.

Want to know which customers are likely to churn? You’d file a ticket with the Business Intelligence (BI) team. Weeks later, if you were lucky, you’d get a static PDF. The data was already out of date. The business had already moved on.

This created a priesthood of data gatekeepers. They held the keys to the kingdom, and the rest of the company had to beg for scraps. The original problem wasn’t a lack of data. It was a lack of access.

The Numbers

These numbers aren’t just figures on a balance sheet; they represent fundamental shifts in how value is created and captured in the data ecosystem. From the sheer scale of cloud infrastructure to private market bets on architectural choices, they show the stage on which the drama of data infrastructure unfolds. This financial backdrop created pressure, making legacy systems untenable and paving the way for the unbundling and rebundling that defines our timeline.

The Timeline

The story of data infrastructure is a story of unbundling and rebundling. We break apart the monolith, perfect the pieces, then glue them back together in a new shape.

Era 1: The On-Prem Monoliths (Pre-2012)

What Enabled It: The simple need for businesses to report on their operations amidst complex, internal data.

The Players: Oracle, Teradata, IBM. The heavyweights of enterprise IT.

The Playbook: Sell a massive, integrated hardware and software package for millions of dollars. Lock customers into multi-year contracts for support and maintenance. The business model was based on friction and control.

Why It Ended: The cloud. AWS made storage and compute cheap, elastic, and accessible. Why buy a million-dollar box for your basement when you could rent a supercomputer by the hour?

For Founders Today: Every founder in this space is, in some way, fighting the ghost of the on-prem monolith. Your product must bet against rigidity.

Era 2: The Cloud Data Warehouse (2012-2020)

What Enabled It: Cheap cloud storage (AWS S3) and compute (EC2).

The Players: Snowflake, AWS Redshift, Google BigQuery.

The Playbook: Separate storage from compute. This was the killer insight. You could store petabytes of data for pennies and only pay for compute when you were actively running a query. It destroyed the on-prem economic model. Suddenly, data warehousing was accessible to startups, not just Fortune 500s.

Why It Ended (or Evolved): The warehouse worked. Too well. Companies dumped everything they had into it. The new problem wasn’t storing the data; it was making sense of it. The warehouse became a data swamp, creating a new set of problems: getting data in (ETL), cleaning it (Transformation), and getting it out to where people actually work (Reverse ETL).

For Founders Today: The central warehouse is now a given. Don’t try to replace it. Bet on connecting with the data warehouse.

Era 3: The Modern Data Stack & The Lakehouse Wars (2020-2024)

What Enabled It: The success of the cloud data warehouse created a need for a surrounding ecosystem of tools.

The Players: Snowflake (representing the Data Warehouse) vs. Databricks (championing the Data Lakehouse).

The Playbook: Unbundle the stack. A best-of-breed tool emerged for each job: one for ingestion (ETL), one for transformation, one for reverse ETL, one for observability. The stack was modular but complex. This era was defined by the great architectural debate:

Data Warehouse (Snowflake): Perfect for structured, analytical data. Think SQL queries and BI dashboards.

Data Lakehouse (Databricks): A hybrid approach designed to handle both structured data and the unstructured stuff needed for machine learning (images, text, logs).

Why It Ended (or Evolved): Generative AI crashed the party. Suddenly, the “unstructured stuff” wasn’t a niche problem for data scientists anymore. It was the fuel for the next generation of products. The Lakehouse argument got a massive boost.

For Founders Today: The debate is settling. Bet on handling diverse data types.

Era 4: The AI Data Layer (2025-Now)

What Enabled It: The Cambrian explosion of large language models (LLMs) and AI applications.

The Players: Snowflake, Databricks, and the hyperscalers (AWS, Azure, Google).

The Playbook: Your data platform is now your AI platform. It’s no longer just for analysts running queries; it’s for applications fetching data to feed to a model. The new features have names like

Cortex AI(Snowflake) and are designed to let companies build AI-powered applications directly on top of their existing data. Microsoft’s Azure growth is being turbo-charged by serving AI inference. The game has changed from passive analytics to active intelligence.For Founders Today: This is the new frontier. Data isn’t just for looking at in a dashboard. It’s an ingredient for building intelligent products. The entire value proposition of the data stack has been elevated. Bet on data platforms becoming AI platforms.

The Graveyard

The research for this space doesn’t show a tidy list of high-profile flameouts. Why? Because in infrastructure, you don’t typically explode. You just fade away. You get absorbed, open-sourced, or squeezed into a niche until you become irrelevant.

The companies that die here don’t fail because their tech is bad. They fail because of a few repeating patterns:

The “Single Pane of Glass” Fallacy: They try to build one platform to do everything — ingestion, transformation, warehousing, visualisation, and AI. They end up building a mediocre version of five different things and get crushed by best-of-breed point solutions.

Getting Squeezed by the Platform: They build a useful feature on top of Snowflake or Databricks. It works. Then, Snowflake or Databricks builds a “good enough” version of that feature natively and gives it away for free. Game over.

Ignoring the Workflow: They build a beautiful tool that requires users to leave the place where they actually work. A salesperson lives in Salesforce. A marketer lives in Marketo. If your tool requires them to open another tab, you’ve already lost.

The lesson is brutal: don’t compete with the giants on their turf. And don’t try to boil the ocean. Find one, painful, specific problem and solve it ten times better than anyone else.

Where The Money Sits

Follow the CAPEX.

The entire data world is built on the foundations laid by three companies: Amazon (AWS), Microsoft (Azure), and Google (GCP). They own the servers, the storage, and the network. They are the landlords. Everyone else in the data ecosystem, including Snowflake and Databricks, is a tenant. They pay the rent in the form of massive cloud bills.

The hyperscalers are now moving up the stack, offering their own data warehouses, machine learning platforms, and AI models. They are in a “one-lane, CAPEX highway that is capacity-constrained,” furiously building out the infrastructure for the AI wave.

The two other major power centres are Snowflake and Databricks. They created a critical abstraction layer on top of the raw cloud infrastructure, making it usable for mere mortals. They captured immense value by simplifying the complexity of the cloud providers.

The money flows from the end customer, through the application and tooling layer, down to the data platforms (Snowflake/Databricks), and finally settles at the cloud providers (AWS/Azure/GCP). The closer you are to the foundation, the larger the pool of money.

What’s Actually Hard

You’ll think you’re building a data tool. You’re actually building a change management consulting business wrapped in software.

This was the quiet killer of many Modern Data Stack hopefuls. They built fantastic ingestion pipelines, beautiful transformation tools, and slick dashboards. But they forgot that data quality isn’t a technical problem in isolation. It’s a human problem. You can install the most sophisticated data observability platform on the planet, but it can’t fix a sales team that enters “asdf” in the company name field in Salesforce. The mantra “garbage in, garbage out” is the iron law of data. Solving data quality is less about tech and more about changing human behaviour, aligning incentives, and fixing broken operational processes.

When Modern Data Stack vendors promised “self-service analytics,” they often delivered a powerful toolbox without a manual, or worse, without understanding the internal political landscape needed for adoption. They forgot that the final 10% – the brutal grind of cleaning messy data, reconciling discrepancies across systems, and training users – is where 90% of the effort lies. Your biggest competitor isn’t another startup; it’s export_to_csv.xlsx combined with a frustrated team that’s simply given up on accurate data. To win, you have to be an order of magnitude better, not just incrementally so, by fundamentally altering how people interact with their own data.

What’s Shipping Now

The arms race has moved from storage to intelligence.

Snowflake isn’t just talking about AI; they’re shipping it. With the launch of Cortex AI and Snowflake Intelligence, they’re offering managed services that let developers build AI applications without being machine learning experts. The rebound in their business shows it’s working (Forbes, Seeking Alpha).

Microsoft Azure is seeing “eye-popping” growth by becoming the engine for AI inference at scale, most notably for services like ChatGPT. They are bundling AI capabilities directly into the cloud platform (The Cube Research).

Amazon‘s CEO is explicit: he sees AI as the catalyst to double AWS sales projections. They are aggressively rolling out new AI and ML services within the AWS ecosystem, betting that the future of cloud is intelligent applications (Economic Times).

The theme is clear: the platforms are moving up the stack to provide not just the data, but the intelligence derived from it.

Where This Goes

The line between the data warehouse and the AI platform is dissolving.

For a decade, the data warehouse was a system of record, a place for historical analysis. Now, it’s becoming a live, active part of the product. The future isn’t about analysts running SQL queries to build dashboards. It’s about applications programmatically querying the data platform to get context for an AI agent. Imagine a customer support AI agent that, in real-time, queries your entire customer history—comprising structured CRM data, unstructured support tickets, call transcripts, and product usage logs—all unified within the data warehouse/lakehouse, to provide hyper-personalised responses or even proactive solutions. This is the “warehouse as nervous system” in practice.

The battle between Snowflake (the king of structured, analytical data) and Databricks (the champion of unstructured, AI-ready data) will define the next five years. Databricks’ soaring private valuation suggests the market believes that a platform built for AI from the ground up has the long-term advantage.

The bet is this: the company that stores your data is in the best position to own your AI strategy. The warehouse/lakehouse is becoming the central nervous system for enterprise intelligence.

Moves Worth Stealing

History provides the playbook.

Snowflake’s “Meet Them Where They Are” Play. Snowflake didn’t try to build its own cloud. It built on top of AWS, then Azure, then GCP. Instead of fighting the incumbents, it turned them into a channel. It made adoption frictionless by going to where its customers already were. The Lesson: Don’t make your customers cross the street. Integrate deeply into their existing stack and workflow.

The dbt Community Playbook. dbt Labs built an open-source tool that became the industry standard for data transformation. They didn’t do it with a huge sales team. They did it by building a fanatical community of practitioners. They defined a new job title—the “Analytics Engineer”—and became the centre of that universe. The Lesson: Win the hearts and minds of the individual developer or analyst. They are your Trojan horse into the enterprise.

The Connector Factory Playbook. An entire category of multi-billion dollar companies was built on a simple, unglamorous premise: build hundreds of reliable pipes to move data from source systems (like Salesforce) into a data warehouse. They focused on one tedious, painful job and executed it flawlessly. The Lesson: Don’t underestimate the value of solving the most boring problem in the stack. Reliability is a killer feature.

The Why Now

The ground is shifting. For the first time in years, there’s an opportunity for new players to emerge.

AI is the New UI. Every software application is being re-imagined as an intelligent, conversational interface. This requires a fundamentally new relationship with data. The old batch-oriented analytics stack isn’t built for this real-time, AI-driven world. This opens massive white space for tools that convert raw enterprise data into AI-ready feature stores, or services that manage data versioning and lineage specifically for model retraining.

The Giants are Distracted. Snowflake and Databricks are locked in a head-to-head battle for the Fortune 500, focusing on core platform features at scale. The hyperscalers are focused on the foundational model and compute layer. This creates a specific gap for startups to focus on mid-market implementation pain, develop vertical-specific data products (e.g., AI-driven insights for manufacturing operations), or build governance and observability tools tailored for the complex outputs of AI agents rather than just human-generated data.

Talent is Abundant. The “Modern Data Stack” wave created an entire generation of engineers who know how to build with these tools. The expertise that was once confined to a few tech companies is now widespread. This means you can build a highly skilled team capable of tackling sophisticated data challenges much more efficiently than ever before.

The window is open to build the tools that will power the next decade of intelligent applications.

If You’re Starting Monday

Don’t build another dashboard.

The Wedge: Find one, painful, “last mile” problem and build a killer solution. Don’t build a generic data quality platform. Build a tool that uses AI to automatically clean and standardise sales activity data from Gong and Outreach before it ever pollutes the warehouse. The pain of “clean sales activity data” is profound because sales reps often game the system (e.g., logging fake activities), AI transcripts from call tools can create duplicate or messy records, and inaccurate activity data completely breaks attribution models for marketing, making future sales forecasting a nightmare. Solve that specific, excruciating problem. Be vertical. Be specific. Be indispensable.

The Trap: Trying to be the “single pane of glass.” Every first-time founder in this space draws a box in the middle of a diagram called “Our Platform” with arrows coming in from everywhere. This is the road to ruin. You will die a death of a thousand integrations.

The Customer: Your user is the Analytics Engineer. They are overworked, under-appreciated, and judged on the reliability of their data pipelines. Your buyer is the Head of Data, who is terrified of their Snowflake bill and the questionable quality of the data inside it. Solve the user’s pain to get the buyer’s budget.

The First Hire: A developer advocate who can write. Not a salesperson. You need someone who can build a following by teaching people how to solve real problems. Your early growth will come from content and community, not cold calls.

Your Monday Checklist:

Identify Your Wedge: What specific, painful “last mile” data problem will you solve? Be vertical.

Map Integration Surface: How will your product seamlessly integrate into existing data stacks (Snowflake, Databricks, CRMs)?

Assess Platform Squeeze Risk: Is your core feature immune to being built by an incumbent platform within 18 months?

Distinguish User vs. Buyer: Who uses it daily? Who signs the cheque? Design for both.

Plan First Hire: Who will build your community and tell your story?

Go Deeper

Amazon CEO sees AI doubling prior AWS sales projections to $600 billion by 2036 — On the scale of the incumbents’ AI ambitions.

Databricks Now Valued At Over 2X Snowflake — The clearest signal of the Warehouse vs. Lakehouse battle.

Snowflake Stock Up 49%. Learn Whether AI Agents Make $SNOW A Buy — How Snowflake is shipping AI features like Cortex AI.

Cloud Quarterly – Azure’s AI Pop, AWS’ Supply Pinch and Google’s Execution — A breakdown of how hyperscalers are monetising AI.

6 Charts That Show The Big AI Funding Trends Of 2025 — Evidence of the capital flooding the AI application layer.

Amazon.com Announces Fourth Quarter Results — The raw numbers behind the AWS machine.

The 2025 AI Index Report | Stanford HAI — Broader context on AI funding trends.

For the ❤️ of startups

Arthur is the AI native startup operating system I’m building in public — not hype, but a system that turns input into structured execution and tracks founder progress. If you want to follow or use it, it’s open for early access.

→ Access Arthur

Thank you for reading. If you liked it, share it with your friends, colleagues and everyone interested in the startup Investor ecosystem.

If you’ve got suggestions, an article, research, your tech stack, or a job listing you want featured, just let me know! I’m keen to include it in the upcoming edition.

Please let me know what you think of it, love a feedback loop 🙏🏼

🛑 Get a different job.

Subscribe and follow me on LinkedIn or Twitter to never miss an update.