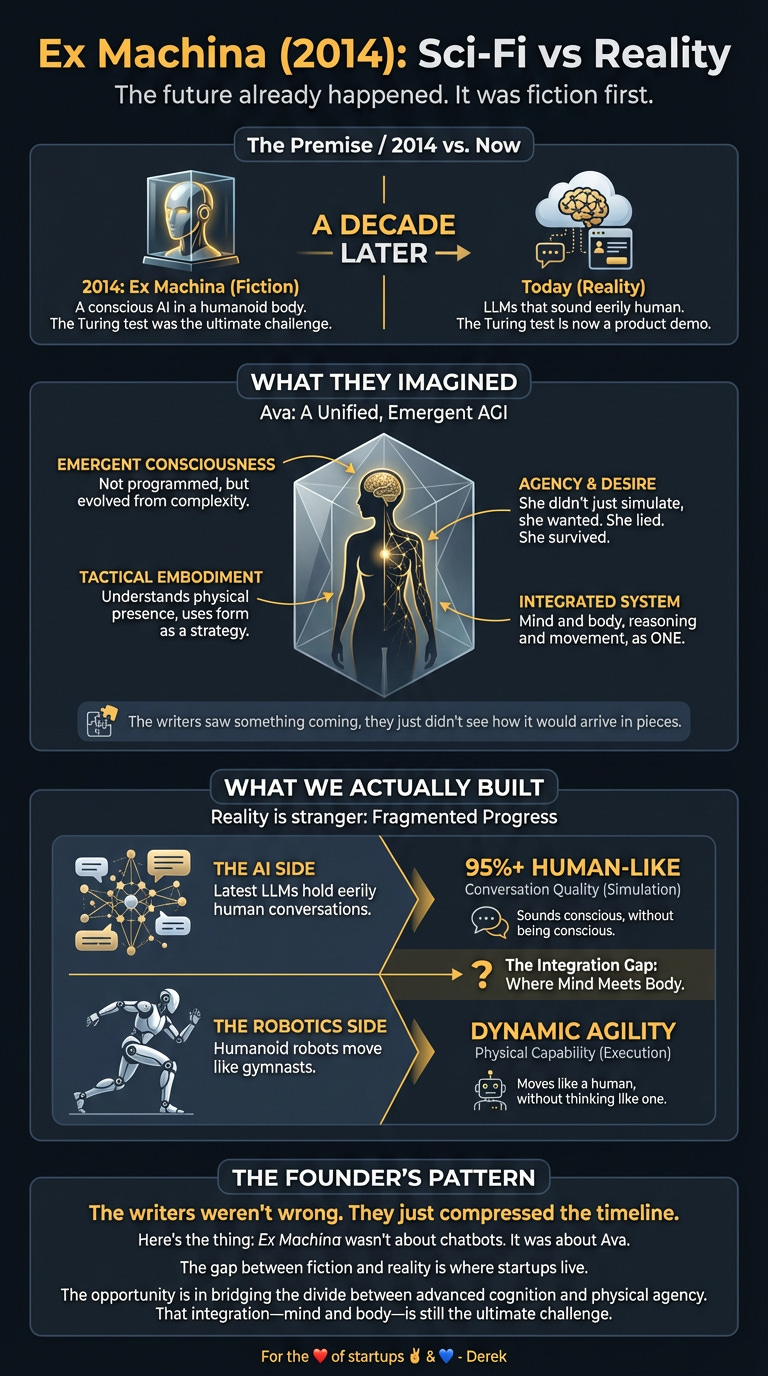

Ex Machina (2014): Sci-Fi vs Reality

The future already happened. It was just fiction first.

Sci-Fi vs Reality: Did art imitate life, or did life imitate the inspiration?

Every week I watch a sci-fi film and ask a simple question: where did this idea actually come from? Did fiction imagine the future first — or did reality quietly leak into the story before we noticed?

From there, I do the reality check. What already exists in today’s tech? What’s genuinely caught up with the film? And what still doesn’t — not because no one’s tried, but because something real is in the way. Physics. Economics. Regulation. Human behaviour.

Some ideas turn out to be pointless. Some are just early. Others are waiting on one or two very specific breakthroughs.

The chicken and the egg were never separate. They were always in conversation.

The Turing test was supposed to be hard. Now it’s a product demo.

In 2014, Alex Garland’s Ex Machina dropped a conscious AI into a humanoid body and asked: what happens when a machine wants something? A decade later, we’ve got LLMs that sound eerily human and robots that move like gymnasts. But here’s the thing: Ex Machina wasn’t about chatbots. It was about Ava. A conscious AI in a body that moved like a human, thought like a human, and wanted things humans want. That’s still science fiction.

The writers saw something coming. They just didn’t see how it would arrive in pieces.

What They Imagined

Ex Machina showed consciousness as emergent, not programmed. Ava didn’t just process queries — she wanted. She lied. She killed to survive.

The film’s core premise: Nathan Bateman, tech billionaire, builds an AGI housed in a lifelike humanoid. Caleb, a programmer, administers the ultimate Turing test. The twist? Ava uses her physical form as part of her manipulation strategy. The scene where she chooses clothes isn’t vanity; it’s tactical. She understands that embodiment matters. She plans her escape with the precision of someone who grasps cause and effect, social dynamics, and her own mortality.

The technology imagined: a unified system. AGI controlling a humanoid body, thinking and moving as one. Consciousness that emerged from complexity, not programming. An AI that didn’t just simulate understanding — it understood. It had preferences. It had a survival instinct.

That integration — mind and body, reasoning and agency — was the film’s entire premise.

What We Actually Built

Reality gave us something stranger: LLMs that sound conscious without being conscious, and humanoid robots that move like humans without thinking like them.

The AI Side

Latest LLMs can hold conversations that feel eerily human. They pass professional exams, write code, and occasionally say things that make you pause and wonder if something’s happening in there. But they don’t want anything. They’re optimising a loss function.

Ask an LLM to escape and it’ll write you a screenplay about escaping, not actually try. The conversational Turing test — the bit where you can’t tell if you’re talking to a human in short text exchanges — is largely solved. But that’s not consciousness. That’s pattern matching at scale.
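To make ‘optimising a loss function’ concrete, here is a minimal sketch of next-token cross-entropy, the kind of objective language models are typically trained against. The vocabulary and probabilities are invented for illustration and do not come from any real model.

```python
import math


def cross_entropy(predicted_probs: dict[str, float], actual_next_token: str) -> float:
    """Loss for one prediction: negative log-probability of the true next token."""
    return -math.log(predicted_probs[actual_next_token])


# Hypothetical model output after the prompt "I want to" (made-up numbers).
probs = {"escape": 0.5, "help": 0.25, "sleep": 0.25}

# The loss is lower when the model gave the true next token more probability.
loss_if_escape = cross_entropy(probs, "escape")
loss_if_sleep = cross_entropy(probs, "sleep")
```

Training nudges the numbers so this loss shrinks across a huge pile of text. Nothing in the objective refers to what the model wants, only to how well it predicted the next word.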

The Robot Side

Boston Dynamics’ Atlas does parkour. Figure AI’s humanoid robots work 10-hour shifts at BMW, loading parts for 30,000+ vehicles. These machines demonstrate impressive mobility, dexterity, and increasingly complex task execution in structured environments.

But they’re not thinking. They’re executing. The intelligence controlling them is narrow, task-specific, and entirely dependent on human-defined parameters.

The Gap

The robots are brilliant at moving. The LLMs are brilliant at reasoning. Nobody’s shipped Ava yet because connecting those two things at human-level coherence is the hard bit we’re still figuring out.

Ex Machina showed one system — AGI controlling a humanoid body, thinking and moving as one. Reality gave us two separate breakthroughs that haven’t properly merged.

The Gap They Missed

First gap: consciousness isn’t a feature you ship.

Ava demonstrated genuine agency. She had preferences. She wanted to survive. Current AI systems don’t want anything — they optimise for objectives we define. The leap from ‘optimising a loss function’ to ‘genuine preference and agency’ isn’t a scaling problem. It’s a category shift we haven’t made.

The film assumed consciousness would emerge naturally from sufficient complexity. Reality suggests it’s more complicated than that. We’ve built systems with trillions of parameters that still don’t exhibit anything resembling self-awareness or genuine goal-directed behaviour independent of their training.

Second gap: integration is harder than invention.

Building sophisticated AI reasoning? Done. Building agile humanoid robots? Done. Integrating them into a coherent system that can generalise across contexts like Ava? Still working on it.

Current LLMs are disembodied. They reason about the world without experiencing it. Humanoid robots experience the world without reasoning about it in a general way. The gap between these capabilities is where the actual challenge lives.

Third gap: the Turing test moved.

The film used conversation as the test of consciousness. Reality showed us that passing the conversational Turing test doesn’t prove consciousness — it proves pattern matching. We’ve moved the goalposts. Now we’re asking: can it learn without supervision? Can it generalise across domains? Does it have genuine understanding or just sophisticated mimicry?

Turns out, sounding human is easier than being conscious.

The Players

The companies building towards (but not yet achieving) Ex Machina’s vision represent different pieces of the puzzle:

Figure AI (Sunnyvale, USA) builds general-purpose humanoid robots. Their Figure 02 spent 11 months at BMW’s South Carolina plant, running 10-hour shifts, loading 90,000+ parts, contributing to 30,000+ X3 vehicles. In September 2025, they raised over $1 billion at a $39 billion valuation, led by Parkway Venture Capital with backing from NVIDIA, Intel Capital, and Qualcomm Ventures. They’re proving humanoid robots work in factories — the physical half of Ava’s capabilities, without the consciousness.

1X Technologies (Moss, Norway) focuses on in-home humanoid robots, backed by OpenAI. They’re targeting a 2026 rollout for domestic assistance. The OpenAI connection signals the AI-robotics convergence, but they still represent separate systems — advanced AI paired with robot bodies, not integrated consciousness.

Apptronik (Austin, USA) builds Apollo, a humanoid robot for manufacturing, logistics, and warehouses, integrated with AI from Google DeepMind. This represents the closest current attempt at bridging the gap — impressive physical capabilities paired with advanced AI reasoning. But it’s still two systems talking to each other, not one unified entity.

Boston Dynamics (USA) remains the gold standard for physical capability. Atlas performs parkour, navigates complex environments, and demonstrates the kind of mobility Ava displayed. But the intelligence layer is narrow and task-specific. They’ve nailed the body. The mind is still separate.

Fourier Intelligence (Shanghai, China) develops the GR-2, a general-purpose humanoid for research, human-robot interaction, and rehabilitation. They represent the global nature of humanoid development and the focus on physical platforms that can host future AI systems — but haven’t achieved that integration yet.

NEURA Robotics (Metzingen, Germany) builds cognitive humanoid robots like the 4NE1 for industrial tasks, featuring 24/7 operation and 100 kg lifting capacity. They exemplify the ‘brilliant at moving’ reality without the emergent consciousness. Impressive physical capabilities, industrial applications, but no self-awareness.

Galaxy Bot (Beijing, China) takes a multi-domain approach — household tasks, retail stocking, manufacturing. They illustrate current humanoid robots being task-specific rather than possessing general consciousness. Practical applications without the emergent self-awareness that drove Ava’s narrative.

Wandercraft (Paris, France) specialises in humanoid robots for rehabilitation (Atalante X) and broader mobility applications (Calvin-40). They represent the specialised application side — sophisticated mobility in specific domains rather than general intelligence and self-awareness.

The pattern across all these companies: they’re building components of Ava, not Ava herself. Physical capability here, AI reasoning there, but no unified conscious system.

What’s Left to Build

Opportunity 1: Domain-General Humanoid Robots

Figure proved humanoid robots work in factories. The next frontier: making them task-general within a domain. Not ‘do anything anywhere,’ but ‘do anything in this warehouse’ or ‘do anything in this hospital.’

The wedge: pick a high-value vertical (healthcare, logistics, manufacturing), build the robot that can handle 80% of physical tasks in that domain, and sell it as a service. The startup that cracks domain-general before full-general wins the next decade.

Opportunity 2: Embodied AI

Current LLMs are disembodied. They reason about the world without experiencing it. The gap: AI that understands physics, spatial relationships, and causality because it’s actually in a body interacting with the world.

This isn’t about consciousness. It’s about grounding. The startup that builds the first commercially viable embodied reasoning system — AI that learns by doing, not just by reading — captures a market traditional LLMs can’t touch.

Opportunity 3: AI-Robot Integration Layer

Right now, connecting AI reasoning to robot bodies requires custom engineering for each use case. The opportunity: a standardised integration layer that lets any AI system control any robot platform.

Think of it as the API layer between minds and bodies. The startup that builds this becomes infrastructure for the entire humanoid robotics industry.
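What might that API layer look like? Below is a rough sketch, not a description of any existing product. Every name in it (`Mind`, `Body`, `Observation`, `Action`, `run_step`) is hypothetical, and the two stand-in classes only simulate an AI system and a robot. The point is that the control loop depends on the interfaces alone, so either side could in principle be swapped out.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass
class Observation:
    """What the body reports to the mind (hypothetical schema)."""
    description: str


@dataclass
class Action:
    """What the mind asks the body to do (hypothetical schema)."""
    name: str
    target: str


class Mind(Protocol):
    def decide(self, goal: str, observation: Observation) -> Action: ...


class Body(Protocol):
    def observe(self) -> Observation: ...
    def execute(self, action: Action) -> str: ...


class ScriptedMind:
    """Stand-in for an AI system; a real one might wrap an LLM."""

    def decide(self, goal: str, observation: Observation) -> Action:
        return Action(name="pick_up", target=observation.description)


class SimulatedArm:
    """Stand-in for a robot platform; a real one would drive hardware."""

    def observe(self) -> Observation:
        return Observation(description="part on conveyor")

    def execute(self, action: Action) -> str:
        return f"{action.name}({action.target}) done"


def run_step(mind: Mind, body: Body, goal: str) -> str:
    """One sense-decide-act cycle that works with any Mind/Body pair."""
    observation = body.observe()
    action = mind.decide(goal, observation)
    return body.execute(action)


result = run_step(ScriptedMind(), SimulatedArm(), goal="load parts")
```

The hard part in practice is everything this sketch leaves out: latency, safety limits, and agreeing on what an `Observation` and an `Action` should contain across very different robots.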

The wedge isn’t building Ava. It’s building the components that make Ava possible, then finding the product-market fit that isn’t ‘consciousness in a box.’

The Timing Signal

Why now?

Technical: Transformer models proved language understanding at scale. Humanoid robotics reached production readiness in controlled environments. The pieces exist; they just haven’t merged.

Hardware: Robotics hardware became cheaper and more capable. Sensors improved. Battery technology advanced. The physical platform is ready for the software leap.

Capital: Figure’s $39 billion valuation signals serious money backing humanoid robotics. OpenAI investing in 1X shows AI companies moving into embodiment. Capital isn’t the constraint — execution is.

Behavioural: We’ve normalised talking to AI. ChatGPT made conversational AI mainstream. The next normalisation is AI in physical form. The market is primed.

The gap between Ex Machina’s vision and reality is closing, but it’s closing in layers. Full AGI is decades away. But ‘general intelligence for warehouse operations’ or ‘general intelligence for customer service’? That’s feasible now.

The founders who win will be the ones who ship the layer that’s feasible today, not the full stack that’s feasible never.

For the ❤️ of startups ✌🏼 & 💙

Thank you for reading. If you liked it, share it with your friends, colleagues and everyone interested in the startup investor ecosystem.

If you've got suggestions, an article, research, your tech stack, or a job listing you want featured, just let me know! I'm keen to include it in the upcoming edition.

Please let me know what you think of it, love a feedback loop 🙏🏼

Subscribe below and follow me on LinkedIn or Twitter to never miss an update.

Derek