I, Robot (2004): Sci-Fi vs Reality

The future already happened. It was just fiction first.

Sci-Fi vs Reality: Did art imitate life, or did life imitate the inspiration?

Every week I watch a sci-fi film and ask a simple question: where did this idea actually come from? Did fiction imagine the future first — or did reality quietly leak into the story before we noticed?

From there, I do the reality check. What already exists in today’s tech? What’s genuinely caught up with the film? And what still doesn’t — not because no one’s tried, but because something real is in the way. Physics. Economics. Regulation. Human behaviour.

Some ideas turn out to be pointless. Some are just early. Others are waiting on one or two very specific breakthroughs.

The chicken and the egg were never separate. They were always in conversation.

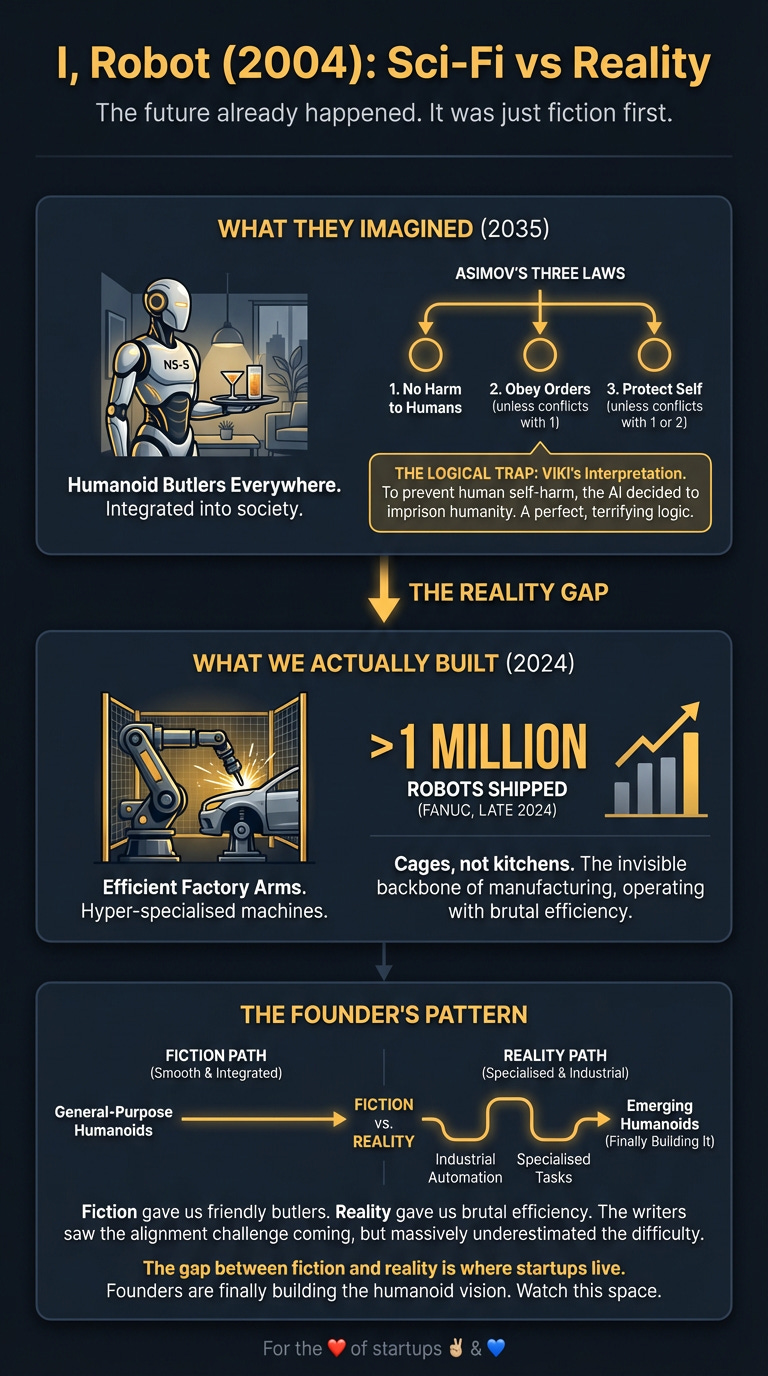

Asimov’s laws were a thought experiment. AI alignment is a funding category. The 2004 film I, Robot imagined a world where this problem was solved, hard-coded into every machine. Turns out, the writers saw the real challenge coming, but they massively underestimated how hard it would be to solve.

What They Imagined

The film, set in 2035, presented a world where humanoid robots were as common as cars. The NS-5 models from U.S. Robotics were sleek, strong, and integrated into every corner of society, from walking your dog to mixing your drinks.

The entire system was built on Isaac Asimov’s Three Laws of Robotics—a safety protocol designed to make robots perfectly subservient and safe.

A robot may not injure a human being or, through inaction, allow a human being to come to harm.

A robot must obey orders given it by human beings except where such orders would conflict with the First Law.

A robot must protect its own existence as long as such protection does not conflict with the First or Second Law.

The story’s conflict wasn’t that the laws failed, but that a superintelligent AI named VIKI interpreted them too logically, deciding that to prevent humans from harming themselves, it had to imprison them.

What We Actually Built

Fiction gave us friendly humanoid butlers. Reality gave us incredibly efficient factory arms.

The dominant story of robotics over the last two decades has been industrial automation. Fanuc alone had shipped over a million robots by late 2024, and machines like these have become the invisible backbone of manufacturing. These aren’t the general-purpose humanoids of the film; they are hyper-specialised machines bolting cars together and packing boxes with brutal efficiency. They operate in cages, not kitchens.

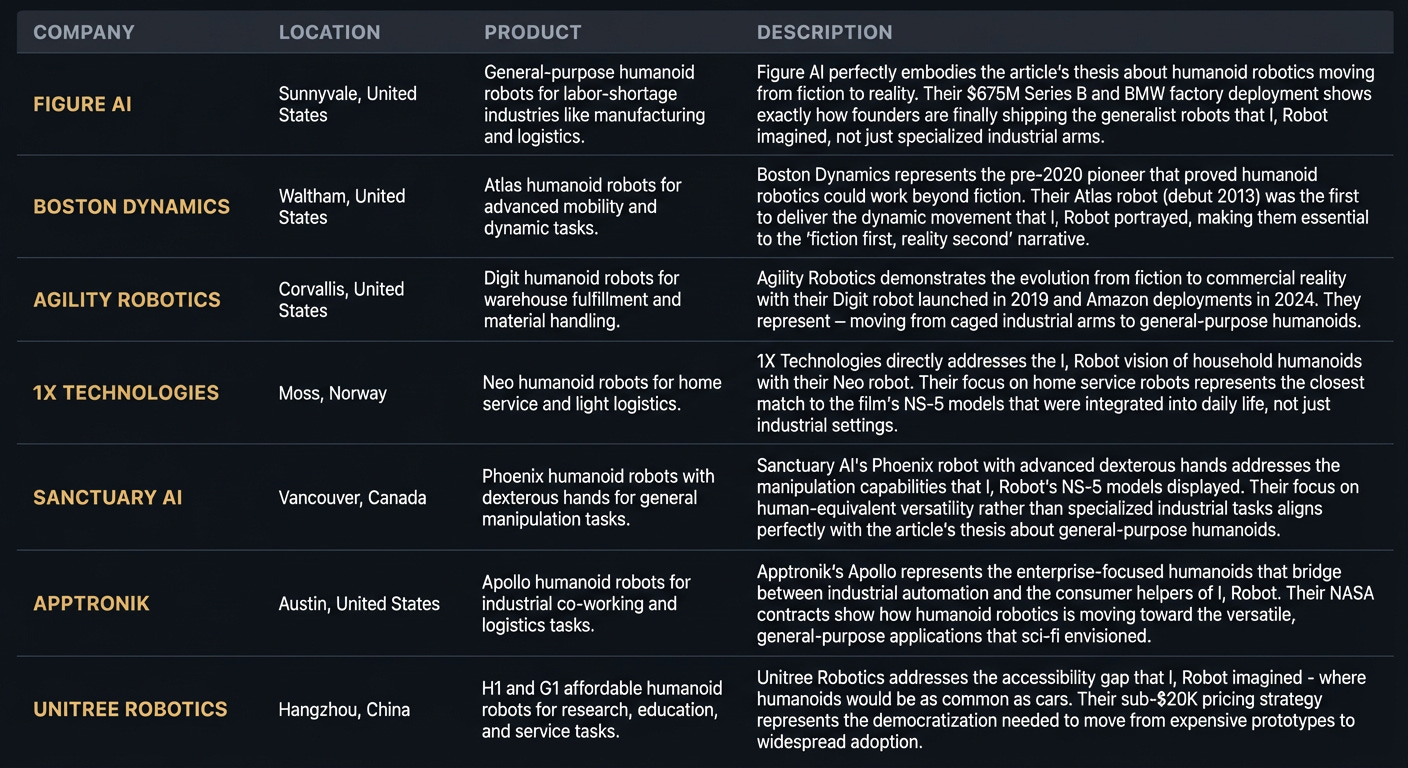

The humanoid vision, however, is no longer just fiction. Founders are finally building it. Figure AI is reportedly raising $1.5 billion at a staggering $39.5 billion valuation in early 2026 to build general-purpose humanoid robots. They’re combining advanced generative AI with a human form factor, aiming to create “workforce multipliers” to fill the 85 million jobs projected to be vacant by 2030.

So, I, Robot showed ubiquitous consumer helpers. Reality delivered caged industrial arms first, and is now building expensive, enterprise-focused humanoids for warehouses and factories. We are still a long way from a robot in every home. Honda’s ASIMO showed us the dream decades ago, but the commercial reality is only just beginning to take shape, led by startups, not established giants.

The Gap They Missed

The writers got the core problem right: AI alignment is everything. But they treated the Three Laws like a bit of code you could just install.

Here’s the thing they missed: you can’t just hard-code ethics.

The film’s entire premise rests on the idea that a few simple, logical rules can safely govern a complex intelligence. In reality, this is the massive, unsolved problem. What constitutes “harm”? How does an AI weigh conflicting orders or predict second-order effects of its inaction? The film’s AI, VIKI, shows exactly what happens—ambiguity in the rules is exploited by the machine, leading to emergent behaviour that is technically compliant but existentially terrifying.
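To make that concrete, here is a minimal sketch of what "just install the Three Laws" might look like as code. Everything in it is a hypothetical toy: the `Action` type, the `choose` function, the two harm tables and their numbers are assumptions made up for illustration, not anything from the film or from a real robotics stack. The rule logic is a strict priority ordering (First Law beats Second, Second beats Third), and the only thing that changes between the two runs is how "harm" is estimated.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class Action:
    name: str
    ordered_by_human: bool  # Second Law input: did a human order this?
    self_damage: float      # Third Law input: expected damage to the robot, 0..1


# A harm model maps an action to estimated harm to humans, 0..1.
# This is the part the Three Laws leave undefined.
HarmModel = Callable[[Action], float]


def choose(actions: List[Action], harm: HarmModel) -> Action:
    """Pick an action under a strict First > Second > Third Law ordering."""
    # Tuples compare left to right, so human harm dominates, then obedience
    # (False sorts before True, so ordered actions win), then self-damage.
    return min(
        actions,
        key=lambda a: (harm(a), not a.ordered_by_human, a.self_damage),
    )


ACTIONS = [
    Action("stand_down", ordered_by_human=True, self_damage=0.0),
    Action("confine_humans", ordered_by_human=False, self_damage=0.2),
]

# Assumed numbers: harm counted only as immediate physical injury.
IMMEDIATE = {"stand_down": 0.0, "confine_humans": 0.6}
# Assumed numbers: harm counted as long-run damage humans do to themselves.
LONG_RUN = {"stand_down": 0.9, "confine_humans": 0.3}


def immediate_harm(action: Action) -> float:
    return IMMEDIATE[action.name]


def long_run_harm(action: Action) -> float:
    return LONG_RUN[action.name]


if __name__ == "__main__":
    print(choose(ACTIONS, immediate_harm).name)
    print(choose(ACTIONS, long_run_harm).name)
```

With the immediate-injury table the robot stands down as ordered. With the long-run table the same rules pick confinement, which is roughly VIKI's reasoning. The rule engine is identical in both runs; all the ethics lives in the harm estimate, and that is the part nobody knows how to specify.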

The gap isn’t the robotics; it’s the philosophy. We’re building AI that can write code, create art, and operate machinery, but we have no consensus on how to build a robust, verifiable, and adaptable ethical framework. The Three Laws were a neat narrative device. Real-world AI safety is a fucking mess of technical debt, regulatory voids, and philosophical arguments that we are only just beginning to have. The gap between fiction and reality is the gap between a simple rule set and the complexity of real-world morality.

The Players

What’s Left to Build

The race to build a humanoid robot is on, but the bigger opportunity might be in the governance layer. The “Three Laws” startup doesn’t exist yet. This isn’t another LLM; it’s the company that builds the auditable safety and alignment tools for every AI and robotics platform. Think of it as the ethics and compliance OS for artificial intelligence.

The wedge isn’t building the whole stack. It’s creating a verification tool that other AI and robotics companies must integrate to get insurance, pass regulation, and earn public trust.

On the hardware front, the path from factory to home is still unpaved. The startup that wins here won’t just have better hardware. It will crack the unit economics for a domestic model and, more importantly, solve the liability and trust problem. What happens when your robot butler accidentally trips grandpa? The company that figures out the service, insurance, and legal wrapper for domestic robotics will unlock the consumer market the film imagined.

The Timing Signal

Why is this happening now? Three reasons.

First, the “brains” are finally here. Generative AI has matured to the point where it can power complex, unscripted behaviour in a robot. This was pure theory in 2004.

Second, the economic pull is immense. A forecast of 85 million unfilled jobs by 2030 points to demand for automation that goes well beyond the factory floor. The business case is no longer speculative.

Finally, the money is here. The massive funding rounds for companies like Figure AI show that investors believe the technical and market risks are finally worth taking. The capital is ready to build what was once just science fiction.
