The rules changed. Here’s the new playbook. 30 principles for building startups in an AI world — what worked before, what broke, and what founders need to do now. Not theory. Tactics that ship.

New principle every Monday

Know Your Models

Choosing the wrong AI model is now a product and cost architecture mistake. Founders need to match model capability to task rather than defaulting to the most powerful or cheapest option. You were told that deep technical expertise in your stack was non-negotiable. Turns out, the stack is now a menu, and your most important technical skill is knowing what to order.

Why This Mattered Before

The conventional wisdom was that a technical founder must deeply understand their core technology. And it was right. When you built everything from scratch, from the database to the front-end framework, that deep knowledge was the difference between shipping and stalling. Knowing the internals of your stack was how you optimised performance, debugged esoteric issues and, ultimately, built a defensible product.

Your unique advantage was in how you put the bricks together because you knew the properties of every single brick. This was the gospel of YC, the definition of a “great hacker,” and for a decade, it was the only way to win.

The Graveyard

I haven’t seen a single, high-profile startup death with “built their own model” listed as the official cause on the tombstone. The reality is more subtle and far more common. In the cohorts I’ve worked with, I’ve watched brilliant teams burn their first £500k and nine months of runway trying to build a bespoke NLP model for something like sentiment analysis or document summarisation.

They’d be heads down, trying to eke out a 2% accuracy gain, convinced this was their moat. Then a new foundation model from Anthropic or Google would drop, delivering better performance out of the box through a simple API call. The startup’s “moat” became a millstone overnight — expensive to maintain, slow to improve, and fundamentally uncompetitive. They didn’t die in a blaze of glory; they just became irrelevant.

What AI Actually Changed

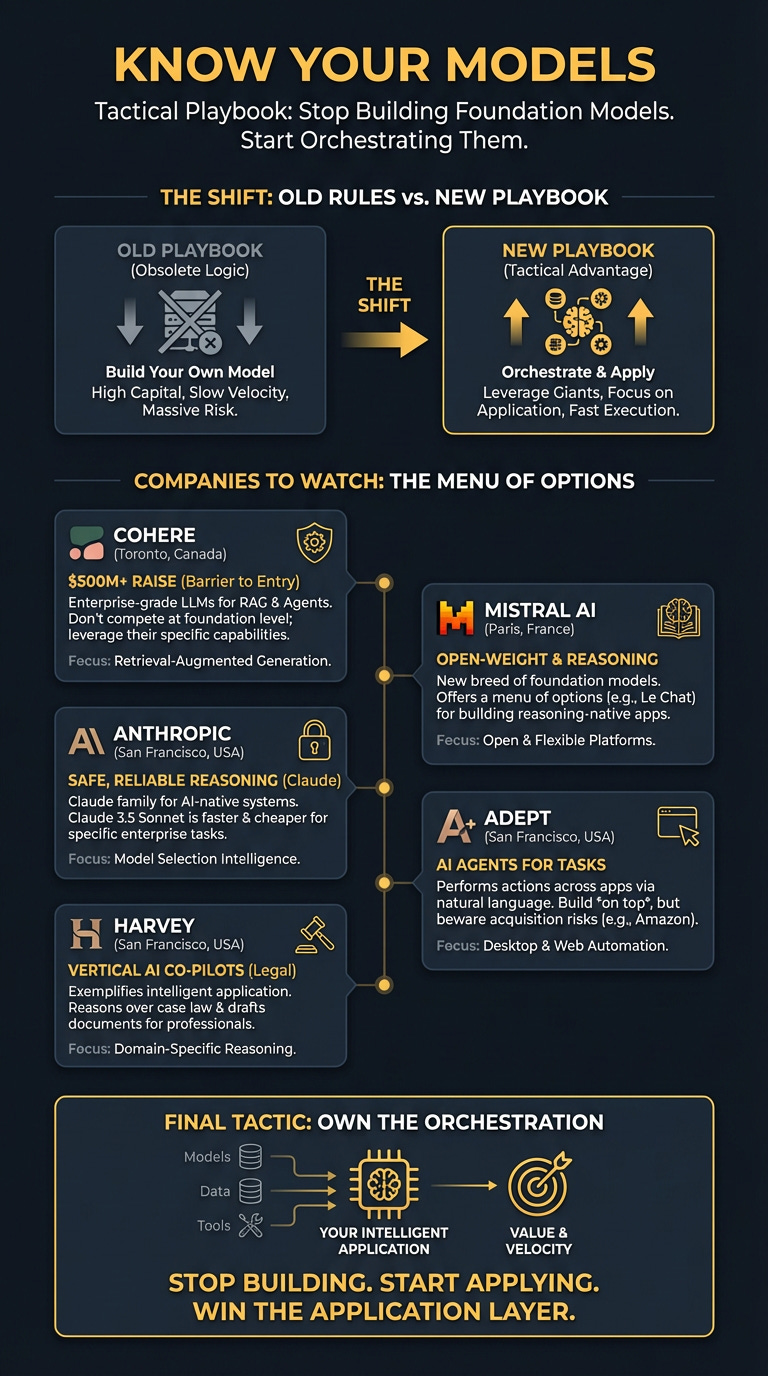

The foundational technical competence has shifted from building the engine to knowing how to drive. You no longer need to know the internals of PostgreSQL. You need to know, for example, that Claude 3.5 Sonnet may be the faster and cheaper choice for enterprise-grade text analysis, while GPT-4o might be better for complex, multi-step agentic workflows, and you need to re-check that judgement every time a new model ships. This is the new literacy.

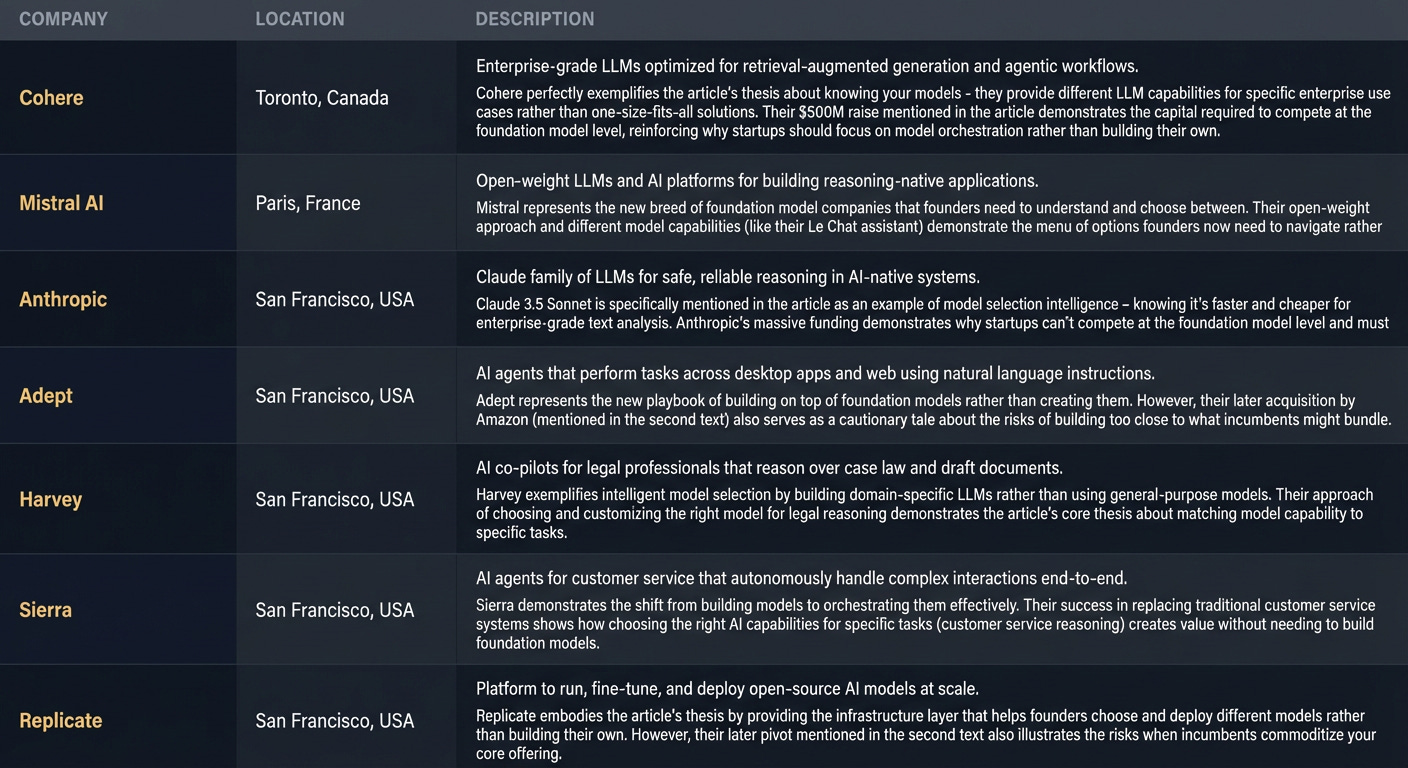

The game is no longer about building your own model from scratch. The capital required is staggering. Cohere raised $500 million in 2025 to focus on enterprise models. xAI is backed by billions. You cannot compete with that. What they’ve done is commoditise raw capability. Your job is no longer to create the capability, but to intelligently apply it. The core engineering challenge has moved up the stack from model creation to model orchestration.

The New Playbook

Audit tasks, not your tech. Map every core task your product performs to the required AI capability. Does this task need world-class reasoning, or just fast, accurate classification? Does it need creative generation or factual extraction? Don’t use a sledgehammer to crack a nut. Use a small, fast model for simple jobs and save the expensive, powerful models for the tasks that truly need them.
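As a minimal sketch of what that audit could look like in code: list each task, answer the two questions above, and let the answers pick the tier. The tier names, the `Task` fields, and the example tasks are all hypothetical, and the escalation rule is deliberately crude.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    SMALL = "small"        # fast, cheap: classification, extraction
    FRONTIER = "frontier"  # slower, pricier: reasoning, open-ended generation


@dataclass(frozen=True)
class Task:
    name: str
    needs_reasoning: bool   # does it need multi-step reasoning?
    needs_creativity: bool  # creative generation rather than factual extraction?


def required_tier(task: Task) -> Tier:
    """Default to the small tier; escalate only when the task demands it."""
    if task.needs_reasoning or task.needs_creativity:
        return Tier.FRONTIER
    return Tier.SMALL


# Hypothetical task inventory for an imaginary support product.
AUDIT = [
    Task("classify_ticket", needs_reasoning=False, needs_creativity=False),
    Task("extract_invoice_fields", needs_reasoning=False, needs_creativity=False),
    Task("draft_renewal_plan", needs_reasoning=True, needs_creativity=True),
]

for task in AUDIT:
    print(f"{task.name}: {required_tier(task).value}")
```

The useful part is the default: every task starts on the small tier and has to earn its way up.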

Build a model “palette”. Don’t get locked into a single provider. Your architecture should allow you to route tasks to different models based on performance and cost. Set up a simple evaluation framework to continuously benchmark models like those from OpenAI, Anthropic, Google, and open-source alternatives on Hugging Face. A simple API call change could cut your COGS in half or double your performance overnight.
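A "simple evaluation framework" can start very small. The sketch below assumes each model is wrapped in a plain function from prompt to answer, so swapping providers means swapping one function. The two stub models and the four labelled cases are invented for illustration; in practice each stub would wrap a real provider call and the cases would come from your own product data.

```python
from typing import Callable, Dict, List, Tuple

# A "model" is any callable from prompt to answer (an assumption of this sketch).
Model = Callable[[str], str]


def stub_model_a(prompt: str) -> str:
    """Hypothetical stand-in for a real provider call."""
    return "positive" if "love" in prompt.lower() else "negative"


def stub_model_b(prompt: str) -> str:
    """Hypothetical stand-in that always gives the same answer."""
    return "positive"


def accuracy(model: Model, cases: List[Tuple[str, str]]) -> float:
    """Fraction of labelled cases the model answers exactly right."""
    correct = sum(1 for prompt, expected in cases if model(prompt) == expected)
    return correct / len(cases)


def benchmark(models: Dict[str, Model], cases: List[Tuple[str, str]]) -> Dict[str, float]:
    """Score every model in the palette against the same labelled cases."""
    return {name: accuracy(model, cases) for name, model in models.items()}


# Made-up sentiment cases; replace with examples from your own product.
CASES = [
    ("I love this product", "positive"),
    ("This is broken again", "negative"),
    ("Love the new release", "positive"),
    ("Worst support ever", "negative"),
]

scores = benchmark({"model_a": stub_model_a, "model_b": stub_model_b}, CASES)
for name, score in scores.items():
    print(f"{name}: {score:.0%}")
```

Re-running the same cases whenever a provider ships a new model is what turns "a simple API call change" into a decision backed by numbers.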

Treat cost-per-token as a core business metric. Your model choice directly impacts your cost of goods sold, and therefore your pricing and margin. A model that’s 98% as good but 80% cheaper might be the right business decision, even if it’s not the absolute peak of technical performance. This is no longer just an engineering choice; it’s a fundamental product and finance decision.
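To make the margin argument concrete, here is a rough sketch of the arithmetic. The prices, token counts, and the price charged per request are placeholder numbers, not any provider's real rates; the budget model is simply set 80% cheaper than the premium one.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    input_per_million: float   # cost per 1M input tokens (placeholder values)
    output_per_million: float  # cost per 1M output tokens (placeholder values)


def cost_per_request(pricing: Pricing, input_tokens: int, output_tokens: int) -> float:
    """Model cost of a single request, in the same currency as the pricing."""
    total = (input_tokens * pricing.input_per_million
             + output_tokens * pricing.output_per_million)
    return total / 1_000_000


def gross_margin(price_charged: float, cost: float) -> float:
    """Share of the price left after paying for the model call."""
    return (price_charged - cost) / price_charged


# Hypothetical prices: the budget model is 80% cheaper than the premium one.
PREMIUM = Pricing(input_per_million=10.0, output_per_million=30.0)
BUDGET = Pricing(input_per_million=2.0, output_per_million=6.0)

PRICE_CHARGED = 0.05  # what the customer pays per request (assumed)

for label, pricing in (("premium", PREMIUM), ("budget", BUDGET)):
    cost = cost_per_request(pricing, input_tokens=2_000, output_tokens=500)
    margin = gross_margin(PRICE_CHARGED, cost)
    print(f"{label}: cost {cost:.4f}, margin {margin:.0%}")
```

With these made-up numbers, the gross margin on each request moves from 30% to 86%. That is why the choice belongs in the finance conversation as well as the engineering one.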

Hire for AI literacy, not ML research. Your first key hire isn’t a PhD who wants to build a model from the ground up. It’s a pragmatic engineer who lives on the leaderboards, understands the trade-offs between different models, and can quickly integrate and fine-tune them. They’re an architect and a plumber, not a research scientist.

The Warnings

First, avoid the “Sledgehammer Fallacy.” I see founders using the most powerful model on the market for every single API call, from parsing a simple user query to generating a button colour. This burns cash and introduces unnecessary latency. It’s lazy architecture, and it will kill your margins.

Second, beware of “Model Monogamy.” Committing your entire product to a single AI provider is a huge strategic risk. When they change their pricing, deprecate a model you rely on, or suffer a major outage, your business is at their mercy. Diversify your model dependencies just like you would any other critical part of your supply chain.
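One hedge against monogamy is a fallback chain: try the preferred provider first and move to the next when it fails. The sketch below assumes each provider is wrapped in a plain function; `primary` and `secondary` are hypothetical stubs, and the outage is simulated.

```python
from typing import Callable, List, Tuple

Provider = Callable[[str], str]


class AllProvidersFailed(RuntimeError):
    """Raised when every provider in the chain has errored."""


def complete_with_fallback(
    prompt: str, providers: List[Tuple[str, Provider]]
) -> Tuple[str, str]:
    """Try providers in order; return (provider_name, answer) from the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # broad on purpose: any failure triggers fallback
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


def primary(prompt: str) -> str:
    """Hypothetical provider that is currently down."""
    raise TimeoutError("simulated outage")


def secondary(prompt: str) -> str:
    """Hypothetical backup provider."""
    return f"summary of: {prompt}"


used, answer = complete_with_fallback(
    "quarterly report", [("primary", primary), ("secondary", secondary)]
)
print(used, "->", answer)
```

A real version would also want timeouts, retries, and a check that the backup model passes your evaluation cases, since a fallback that silently degrades quality is its own kind of outage.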

The Bottom Line

Your first AI hire isn’t a researcher. It’s an architect who knows which model to use, when, and precisely what it costs.

Part of Startup Principles for an AI World — 30 principles for building in the new era. New issue every week.

Arthur is the AI-native startup operating system I’m building in public: not hype, but a system that turns input into structured execution and tracks founder progress. If you want to follow or use it, it’s open for early access.


Enjoy and hope to see you soon.

❤️ & ✌🏼
