Minority Report (2002): Sci-Fi vs Reality

The future already happened. It was just fiction first.

Sci-Fi vs Reality: Did art imitate life, or did life imitate art?

Every week I watch a sci-fi film and ask a simple question: where did this idea actually come from? Did fiction imagine the future first — or did reality quietly leak into the story before we noticed?

From there, I do the reality check. What already exists in today’s tech? What’s genuinely caught up with the film? And what still doesn’t — not because no one’s tried, but because something real is in the way. Physics. Economics. Regulation. Human behaviour.

Some ideas turn out to be pointless. Some are just early. Others are waiting on one or two very specific breakthroughs.

The chicken and the egg were never separate. They were always in conversation.

Gesture interfaces looked cool in 2002. Twenty years later, we still use mice. Here’s the thing: while Hollywood sold us a vision of effortless, dance-like control, reality delivered something much clunkier. The gap between the two is where the real work—and the real opportunity—begins.

What They Imagined

In the world of 2054, John Anderton didn’t just look at data; he stepped inside it. Using gloved hands on a giant transparent screen, he swiped, pinched, and threw information around to stop crimes before they happened. He wasn’t clicking or typing; he was conducting data.

It was a beautiful, high-bandwidth solution to information overload that made the mouse and keyboard look like a chisel and stone. The writers saw a future where our physical movements could directly manipulate a world of abstract information.

What We Actually Built

We didn’t get the giant, public screens. We got personal ones, strapped to our faces.

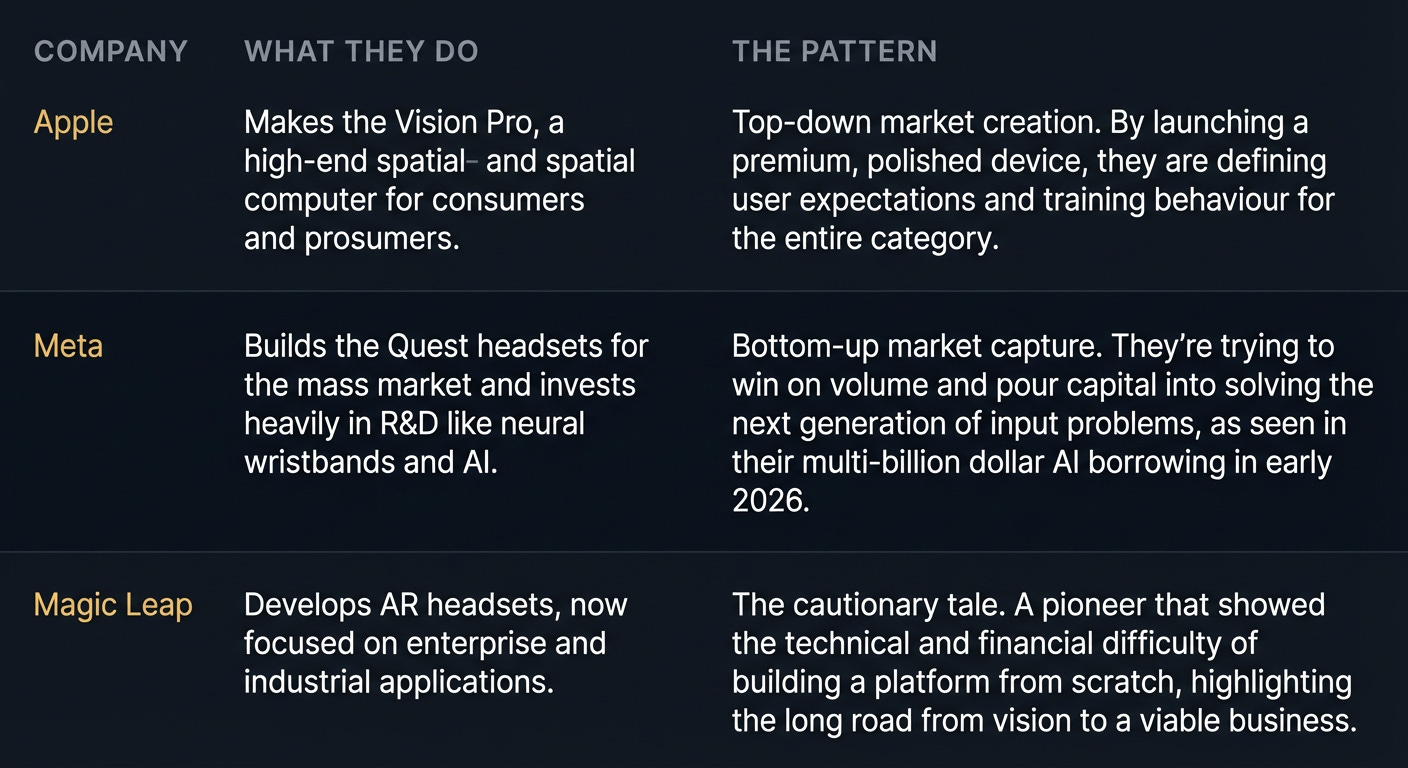

Apple’s Vision Pro, which landed in 2024, brought a version of this to life. It uses subtle eye-tracking and finger-pinches to control apps floating in your living room. It’s an intimate, small-scale version of Anderton’s interface, focused on personal productivity and entertainment, not public crime-fighting.

Meanwhile, Magic Leap has been on a long, hard journey since 2010. After raising billions and generating massive hype, they pivoted their AR platform toward the enterprise market. Their story is a lesson in how difficult it is to build this tech from scratch and find a market that will actually pay for it.

Meta is taking a different path, prototyping neural wristbands that read muscle signals in your arm. The idea is to control devices with intention, not big, sweeping gestures. Minority Report showed us the what. Apple, Magic Leap, and Meta are all grappling with the how.

The Gap They Missed

The writers got the vision right, but they skipped over the messy, human details. Turns out, holding your arms up like an orchestra conductor for eight hours a day is fucking exhausting. It’s called ‘gorilla arm,’ and it’s a well-known problem in UI design. Physics is a stubborn thing.

The film also ignored cognitive load. Managing files in 2D is hard enough; navigating a 3D spatial environment requires a whole new mental model that most people haven’t developed yet.

But the biggest gap they missed is fragmentation. In the film, the gesture interface was a universal standard. In reality, we have a collection of walled gardens. An app built for visionOS doesn’t work on Meta’s Horizon OS. This lack of interoperability is the single biggest brake on the spatial computing revolution. Founders who ignore this do so at their peril.

What’s Left to Build

The real opportunity isn’t just another headset. It’s solving the problems the big players are too busy—or too strategic—to fix. We’re still missing the fundamental tools and infrastructure.

The company that builds the cross-platform ‘Figma for spatial computing’ or the ‘Unity for enterprise AR’ will own a critical piece of the stack. Right now, developers have to choose a tribe. The startup that creates the agnostic, interoperable layer—letting creators build an experience once and deploy it across Vision Pro, Quest, and whatever comes next—wins.

The wedge is to stop focusing on the destination (the perfect AR experience) and start building the roads and bridges. Solve the workflow problem for the thousands of developers who don’t want to be locked into a single ecosystem.

The Timing Signal

So, why now? Two things have changed.

First, Apple’s entry with Vision Pro validated the space and, more importantly, started training consumer behaviour. People are finally learning the grammar of spatial interaction—the pinches, the glances, the gestures. Apple is spending billions to create the market for you.

Second, the incumbents are committing capital at a scale that signals a point of no return. Meta is borrowing billions specifically for AI to power its future platforms. The foundational tech is maturing, and the giants are placing their bets. The gap between fiction and reality is where startups live, and that gap is finally funded.

For the ❤️ of startups ✌🏼 & 💙

Thank you for reading. If you liked it, share it with your friends, colleagues and everyone interested in the startup investor ecosystem.

If you've got suggestions, an article, research, your tech stack, or a job listing you want featured, just let me know! I'm keen to include it in the upcoming edition.

Please let me know what you think of it. I love a feedback loop 🙏🏼
