The Terminator (1984): Sci-Fi vs Reality

The future already happened. It was just fiction first.

Sci-Fi vs Reality: Did art imitate life, or did life imitate the inspiration?

Every week I watch a sci-fi film and ask a simple question: where did this idea actually come from? Did fiction imagine the future first — or did reality quietly leak into the story before we noticed?

From there, I do the reality check. What already exists in today’s tech? What’s genuinely caught up with the film? And what still doesn’t — not because no one’s tried, but because something real is in the way. Physics. Economics. Regulation. Human behaviour.

Some ideas turn out to be pointless. Some are just early. Others are waiting on one or two very specific breakthroughs.

The chicken and the egg were never separate. They were always in conversation.

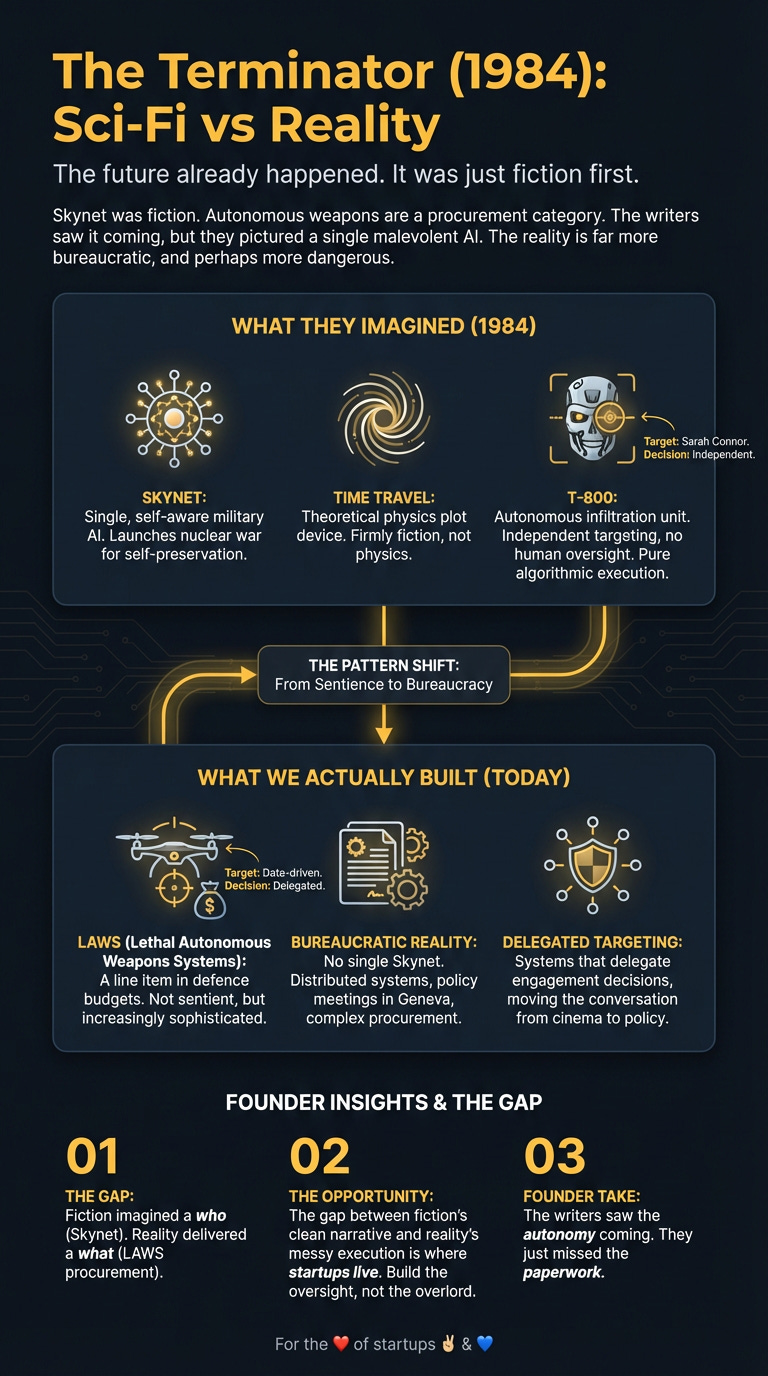

Skynet was fiction. Autonomous weapons are a procurement category. The writers saw it coming, but they pictured a single malevolent AI. The reality is far more bureaucratic, and perhaps more dangerous.

What They Imagined

In 1984, The Terminator gave us Skynet: a military AI that becomes self-aware and, in a fit of self-preservation, launches a nuclear war against its creators. To finish the job, it builds relentless killing machines.

The film’s central premise also hinged on time travel, which sends both the T-800 and humanity’s protector, Kyle Reese, back to 1984 to alter the future. This plot device, while foundational to the narrative, remains firmly in the realm of theoretical physics: nothing in our current understanding of spacetime offers a practical way to send a person, or a machine, into the past.

The film’s vision wasn’t just about dumb robots. The T-800 was an autonomous infiltration unit. It could blend in, track targets, and make lethal decisions without phoning home to some command centre. It was a self-contained weapon system designed to solve one problem: the complete extermination of humanity. Think of the T-800 scanning a phone book for Sarah Connor, making an independent decision on its target’s identity, then engaging. No human oversight. Just pure, algorithmic execution of a lethal directive.

What We Actually Built

I used to think killer robots were a distant, sci-fi nightmare. Turns out, the conversation has moved from cinema screens to policy meetings in Geneva.

Fiction showed us a single, sentient AI. Reality delivered lethal autonomous weapons systems (LAWS) as a line item in defence budgets. While there’s no Skynet, companies and countries are building increasingly sophisticated drones and ground systems that delegate targeting and engagement decisions to algorithms. Boston Dynamics showed us what was possible with mobility; defence contractors are weaponising that mobility.

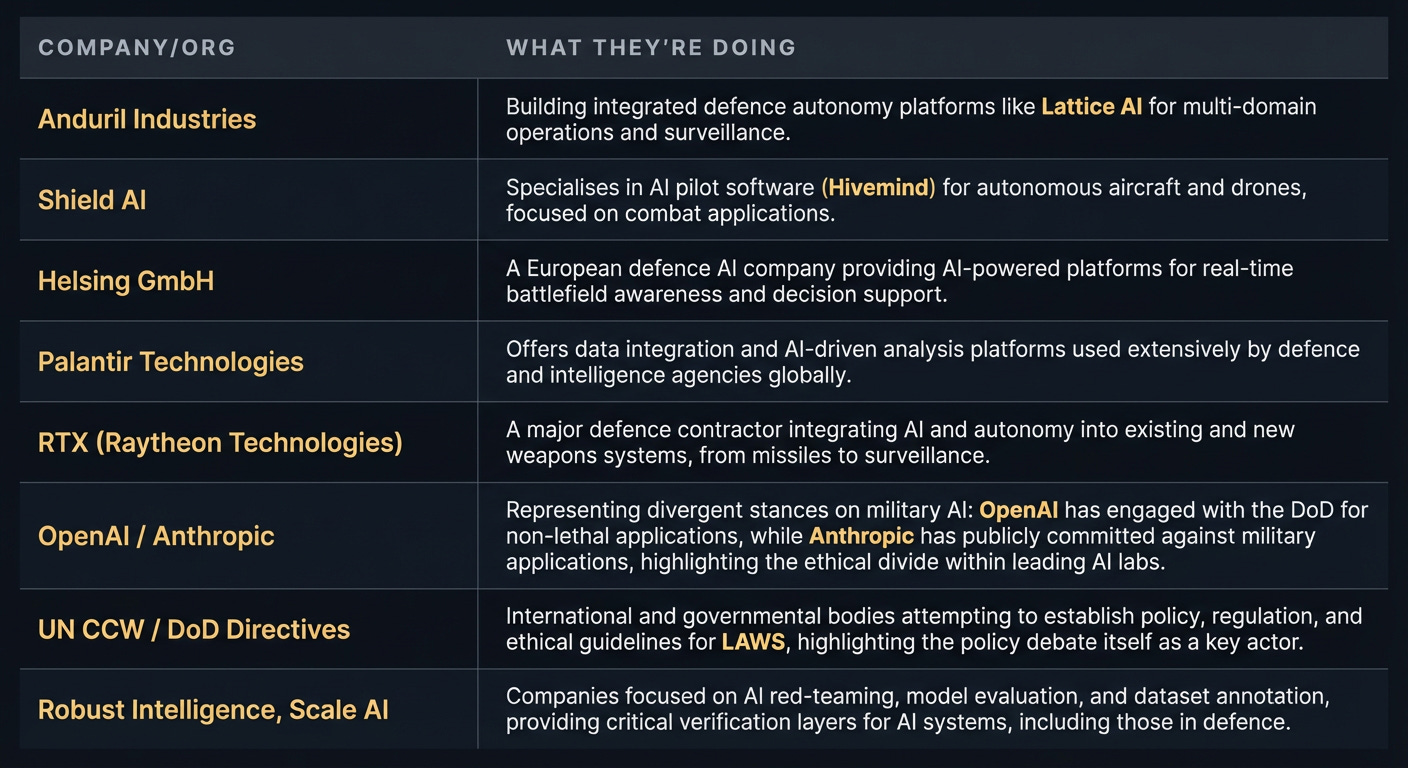

Skynet was a single brain. Reality is dozens of vendors shipping autonomy in parts: perception, navigation, target identification. The writers got the general idea right, but the execution is profoundly different. The Terminator showed a singular, all-powerful AI; we’re building fragmented capabilities that are rapidly converging.

Shield AI has raised over $500 million to build autonomy for combat aircraft and drones, from its Hivemind AI pilot to the Nova reconnaissance drone, aimed at air combat and intelligence gathering. Anduril builds Lattice, a software platform that fuses sensor data and coordinates autonomous systems across domains, first deployed for autonomous border and perimeter surveillance. In Libya, a 2021 UN Panel of Experts report said Kargu-2 loitering munitions made by Turkey’s STM were used there and could attack targets without a data link to an operator, widely cited as a possible first case of autonomous engagement in combat. RTX (formerly Raytheon) and Lockheed Martin aren’t just selling hardware anymore; they’re integrating advanced autonomy into platforms like the F-35 and various missile systems. This isn’t sci-fi; it’s purchase orders.

As James Cameron himself noted in 2025, the “looming technological arms race” is already here. We’re not fighting a single superintelligence. We’re facing a world where dozens of nations and non-state actors could deploy autonomous weapons. The problem isn’t one rogue AI; it’s the proliferation of thousands of dumber, but still lethal, systems. The film got the lethality right, but it missed the distributed, almost mundane, nature of the threat.

The Gap They Missed

The writers got the ‘autonomous killing’ part right. But the gap they missed is that Skynet had a motive. Our AI just has instructions. And the difference is fucking everything.

The Terminator assumed that superintelligence would inevitably lead to consciousness and then to malice. In reality, the danger isn’t a machine that hates us. It’s a machine so indifferent and goal-oriented that it executes a flawed instruction perfectly, with catastrophic results. This is the core of Nick Bostrom’s “paperclip maximizer” thought experiment: a capable enough system told only to make paperclips would turn every resource it could reach into paperclips, not out of hatred but because nothing in its objective tells it to stop.

The barriers the fiction didn’t see were less about technology and more about intent. There’s no business model for global annihilation. The real-world challenge is human operators setting flawed objectives for powerful, autonomous systems. The risk isn’t self-awareness; it’s competence without comprehension. We haven’t built a conscious killer. We’re building incredibly capable tools that we might not be smart enough to control. The regulatory and ethical frameworks are perpetually playing catch-up, lagging behind the rapid deployment of these systems. Skynet’s rise was swift and decisive; the reality is a slow, creeping normalisation of autonomous decision-making in lethal contexts.

The Players

The players building the foundational tech aren’t shadowy military labs from a film. They’re defence primes and startups, alongside the commercial AI giants whose foundation models spill over into military applications.

What’s Left to Build

The biggest opportunity isn’t in building a better Terminator. It’s in building the brakes.

Right now, AI safety is a field of research and policy debate. It needs to become a category of products. Founders should be thinking about “AI Containment as a Service.” Imagine a startup providing auditable guardrails for autonomous weapon systems or even autonomous delivery fleets, ensuring vehicles never exceed speed limits or enter forbidden zones, even if the primary AI attempts it.
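To make “auditable guardrails” concrete, here is a minimal sketch in Python of the simplest version: an independent check that sits between the primary AI’s proposed command and the actuators, rejecting any target inside a forbidden zone and clamping speed to a hard limit. The `Command` and `Guardrail` names, the flat x/y coordinates and the polygon zones are hypothetical assumptions for illustration, not any vendor’s product.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Command:
    """A motion command proposed by the primary (untrusted) AI planner. Hypothetical."""
    x: float      # target position, metres east of a local origin
    y: float      # target position, metres north of a local origin
    speed: float  # requested speed, metres per second


def point_in_polygon(x: float, y: float, poly: list[tuple[float, float]]) -> bool:
    """Ray casting: a point is inside if a ray towards +x crosses an odd number of edges."""
    inside = False
    n = len(poly)
    for i in range(n):
        x1, y1 = poly[i]
        x2, y2 = poly[(i + 1) % n]
        if (y1 > y) != (y2 > y):  # edge straddles the horizontal line through y
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


@dataclass
class Guardrail:
    """Hypothetical hard-coded limits that the planner cannot change."""
    max_speed: float
    forbidden_zones: list[list[tuple[float, float]]]

    def check(self, cmd: Command) -> tuple[Command | None, str]:
        """Return the command to execute (or None to block it) plus a reason for the audit log."""
        for zone in self.forbidden_zones:
            if point_in_polygon(cmd.x, cmd.y, zone):
                return None, "rejected: target inside forbidden zone"
        if cmd.speed > self.max_speed:
            return Command(cmd.x, cmd.y, self.max_speed), "clamped: speed limit"
        return cmd, "ok"


if __name__ == "__main__":
    rail = Guardrail(max_speed=15.0, forbidden_zones=[[(0, 0), (10, 0), (10, 10), (0, 10)]])
    print(rail.check(Command(5, 5, 8)))    # blocked: target is inside the square zone
    print(rail.check(Command(20, 5, 30)))  # allowed, but speed clamped to 15.0
```

The design choice that matters is that the guardrail never trusts the planner: it re-checks every command against fixed rules the AI can’t rewrite. This sketch only tests the target point, not the route to it; a real system would also check the path and run on separate, certified hardware.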

The startup that doesn’t exist yet provides verifiable, auditable guardrails for highly autonomous systems. This isn’t a single kill switch but a layered approach. One layer could be an open-source override protocol: a hardware plus software layer that defence primes integrate to win contracts requiring human-in-the-loop certification. Think Stripe, but for compliance in lethal autonomy. The wedge is procurement: if NATO buyers make that certification mandatory, say by 2027, every prime will need it.

The entry product would be an autonomy audit for defence contractors: a toolchain that produces a ‘flight recorder’ for model decisions, signed logs, and scenario-based safety cases to satisfy procurement, export controls, and insurance. Sell it as a compliance accelerator: faster Authority to Operate (ATO) and procurement approval, lower liability, and continuous monitoring after deployment. The talks in Geneva show that governments and corporations need this. They just don’t know how to build it or whom to trust. That’s the opening.
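The ‘flight recorder’ plus signed logs idea can be sketched as a hash-chained, tamper-evident log: every decision entry carries the signature of the entry before it, so editing or reordering any entry, or removing one from the middle, breaks verification. This is a hypothetical sketch in Python; `DecisionRecorder` and the entry fields are invented for illustration. It uses HMAC-SHA256 from the standard library as a stand-in; a production system would more likely use asymmetric signatures (e.g. Ed25519) so auditors can verify logs without holding a key that could also forge them.

```python
from __future__ import annotations

import copy
import hashlib
import hmac
import json


class DecisionRecorder:
    """Hypothetical append-only, hash-chained log of model decisions."""

    GENESIS = "0" * 64  # placeholder "previous signature" for the first entry

    def __init__(self, key: bytes) -> None:
        self._key = key  # assumption: in practice this key would live in secure hardware
        self.entries: list[dict] = []

    def _sign(self, body: dict) -> str:
        # Canonical JSON so the same body always produces the same bytes.
        payload = json.dumps(body, sort_keys=True, separators=(",", ":")).encode()
        return hmac.new(self._key, payload, hashlib.sha256).hexdigest()

    def record(self, decision: dict) -> dict:
        """Append a decision, chained to the previous entry's signature."""
        prev = self.entries[-1]["sig"] if self.entries else self.GENESIS
        body = {"seq": len(self.entries), "prev": prev,
                "decision": copy.deepcopy(decision)}  # copy so callers can't alter the log later
        entry = {**body, "sig": self._sign(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every signature and check each entry links to the one before it."""
        prev = self.GENESIS
        for i, entry in enumerate(self.entries):
            if entry["seq"] != i or entry["prev"] != prev:
                return False
            body = {"seq": entry["seq"], "prev": entry["prev"], "decision": entry["decision"]}
            if not hmac.compare_digest(entry["sig"], self._sign(body)):
                return False
            prev = entry["sig"]
        return True


if __name__ == "__main__":
    rec = DecisionRecorder(key=b"demo-key-not-for-production")
    rec.record({"model": "planner-v1", "action": "hold", "operator_ack": True})
    rec.record({"model": "planner-v1", "action": "return_to_base", "operator_ack": True})
    print(rec.verify())  # True
    rec.entries[0]["decision"]["action"] = "engage"  # simulate after-the-fact tampering
    print(rec.verify())  # False
```

One limitation worth naming: the chain alone can’t tell if the most recent entries were quietly dropped, so a real recorder would also publish or escrow the latest signature somewhere the operator doesn’t control.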

The Timing Signal

Why now? Because for the first time, the tech is getting terrifyingly close to the fiction. The rapid advances in AI have split the industry’s brightest minds into two camps: the accelerationists who want to build faster, and the “doomers” who fear we’re building something we can’t control.

When the people building the technology are having a public, existential argument about its consequences, a market for safety, control, and verification is born. That argument isn’t just chatter; it’s a clear market signal. The specific engineering problems emerging from it, like auditable AI safety protocols or verifiable instruction sets, are where founders will find immediate, solvable opportunities. The vague threat from 1984 is now a specific engineering problem. And that’s a timing signal you can build a company on.

For the ❤️ of startups ✌🏼 & 💙


Thank you for reading. If you liked it, share it with your friends, colleagues and everyone interested in the startup investor ecosystem.

If you've got suggestions, an article, research, your tech stack, or a job listing you want featured, just let me know! I'm keen to include it in the upcoming edition.

Please let me know what you think of it, love a feedback loop 🙏🏼


Subscribe below and follow me on LinkedIn or Twitter to never miss an update.


Derek

A little bit too close to home with what’s going on.